The Azure PostgreSQL Workshop

Welcome to the Azure PostgreSQL Workshop — a hands-on, scenario-driven lab that takes you from zero to confidently operating Azure Database for PostgreSQL Flexible Server in production.

You will deploy infrastructure with Bicep, load realistic data, deliberately break things, observe the damage through monitoring, diagnose root causes, and fix them — the same cycle you follow in real operations.

This is a two-day workshop. We’ll move at a comfortable pace with plenty of time for questions, discussion, and troubleshooting. Whether you’re coming from an Oracle, SQL Server, or cloud-native background — please ask questions as we go. There are no silly questions, and the best workshops are the ones where everyone participates!

Who Is This For?

- Developers building applications on Azure PostgreSQL who want to understand the platform deeply

- Platform / DevOps engineers responsible for deploying, securing, and monitoring PostgreSQL on Azure

- DBAs migrating from Oracle, or on-premises PostgreSQL to Azure

Workshop Journey

Follow these 10 steps from infrastructure deployment to teardown:

| Step | Phase | What You Do |

|---|---|---|

| 1 — Deploy | Bicep → PG Flex + jumpbox + VNets, DNS, storage | Deploy the full environment or the simple server |

| 2 — Connect | SSH to jumpbox → psql, .pg_azure, .pgpass | Establish connectivity and store credentials securely |

| 3 — Load Data | pg_restore orders_demo — 4 tables, ~410K rows | Import a realistic e-commerce dataset |

| 4 — Break Workload | 6 heavy queries — CPU + IOPS + temp spills | Run intentionally unoptimised queries to generate load |

| 5 — Monitor | Azure Metrics, QPI, alerts, diagnostic settings | Observe the impact while the damage is fresh |

| 6 — Admin & Access | Roles, permissions, RBAC, PgBouncer pooling | Learn PostgreSQL access control and connection pooling |

| 7 — Protect | Backup, replication, HA/DR, security, patching | Cover business continuity and security management |

| 8 — Diagnose | DB profiling, MVCC, statistics, EXPLAIN, parameter tuning | Understand why the workload was slow |

| 9 — Fix | Index tuning lab — before → after comparison | Add the right indexes and prove the improvement |

| 10 — Clean Up | Delete resource group, remove credentials | Tear down all resources |

Day 1 — Deploy, Connect, Monitor, Administer & Protect

| Time | Session | What We’ll Do | Duration |

|---|---|---|---|

| 09:00 | Welcome & Setup | Introductions, prerequisites, Azure Cloud Shell | 30 min |

| 09:30 | Deploy | Bicep → PostgreSQL Flex + jumpbox + VNets, DNS, storage | 35 min |

| 10:05 | Connect | SSH to jumpbox, psql basics, SSH tunnels, VS Code | 40 min |

| 10:45 | ☕ Break | 15 min | |

| 11:00 | Load Data | Restore orders_demo (4 tables, ~410K rows), explore the schema | 25 min |

| 11:25 | Break the Workload | Run 6 intentionally heavy queries — CPU, IOPS, temp spills | 25 min |

| 11:50 | Q&A — Morning Recap | Questions on everything so far, troubleshoot any setup issues | 10 min |

| 12:00 | 🍽️ Lunch | 60 min | |

| 13:00 | Azure Monitoring | Portal metrics, QPI, KQL, alerts — correlate spikes to the demo queries | 35 min |

| 13:35 | Administration & Roles | Server parameters, roles, GRANT/REVOKE, INHERIT vs NOINHERIT | 50 min |

| 14:25 | ☕ Break | 15 min | |

| 14:40 | Logical Backup | pg_dump / pg_restore — formats, selective restore, verification | 25 min |

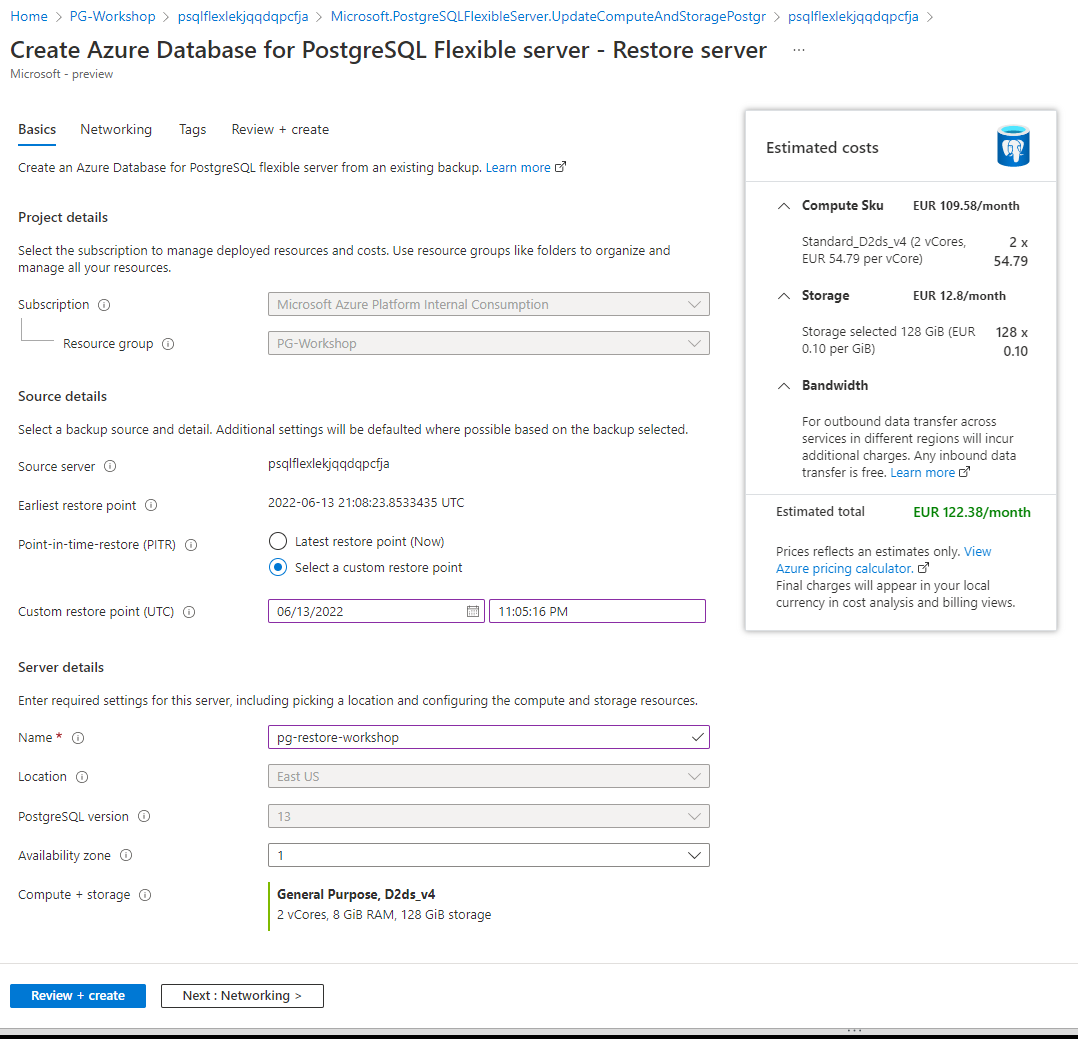

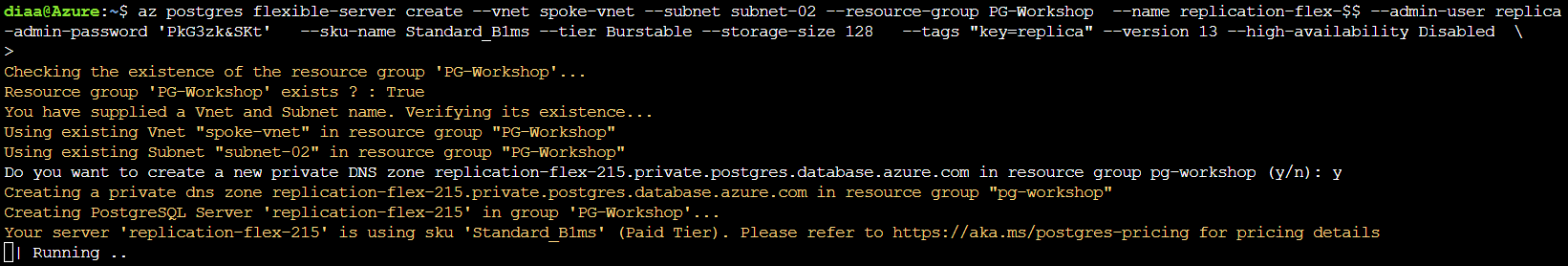

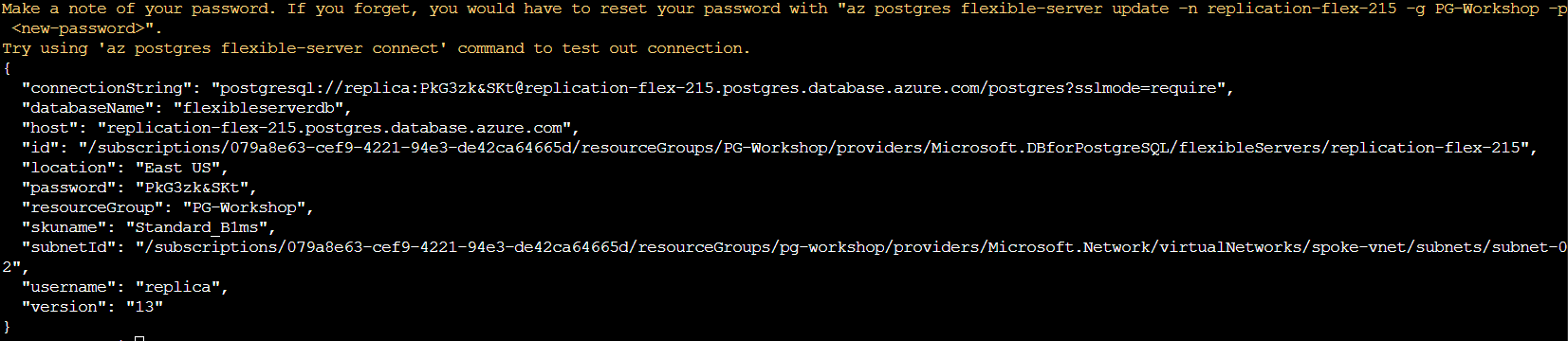

| 15:05 | Business Continuity | Physical backup & PITR, HA/DR failover, security & patching | 30 min |

| 15:35 | Q&A — Day 1 Wrap-up | Open discussion, review key concepts, preview of Day 2 | 25 min |

| 16:00 | End of Day 1 |

Day 2 — Profile, Diagnose & Fix

| Time | Session | What We’ll Do | Duration |

|---|---|---|---|

| 09:00 | Day 2 Kick-off | Quick recap of Day 1, questions from overnight | 10 min |

| 09:10 | Database Profiling | 22 diagnostic queries — health check & performance triage scripts | 50 min |

| 10:00 | ☕ Break | 15 min | |

| 10:15 | MVCC & Autovacuum | Dead tuples, VACUUM vs VACUUM FULL, bloat, autovacuum tuning | 30 min |

| 10:45 | Parameter Tuning | work_mem, shared_buffers, effective_cache_size — measure the impact | 30 min |

| 11:15 | Q&A — Internals | Pause for questions — this is the densest part of the workshop | 15 min |

| 11:30 | SQL Characteristics | Partial indexes, JSONB, GIN indexes — PostgreSQL superpowers | 20 min |

| 11:50 | Statistics & Query Planning | EXPLAIN, cost model, join algorithms — nested loop vs hash vs merge | 25 min |

| 12:15 | 🍽️ Lunch | 60 min | |

| 13:15 | Index Tuning Lab | Add 5 indexes, re-run the broken workload, measure 2–50× speedup | 45 min |

| 14:00 | ☕ Break | 15 min | |

| 14:15 | Extensions (optional) | pg_trgm, uuid-ossp, pgcrypto, pg_cron | 20 min |

| 14:35 | Clean Up | Delete resource group, verify everything is gone | 10 min |

| 14:45 | Final Q&A & Wrap-up | Open floor — ask anything! Feedback, next steps, resources | 30 min |

| 15:15 | End of Workshop |

What You Will Learn

| Theme | Topics |

|---|---|

| Deployment & Access | Bicep IaC, Azure Flexible Server provisioning, networking (VNet, private DNS, peering), connection methods (psql, SSH tunnel, VS Code), roles & permissions, connection pooling |

| Business Continuity & Security | Logical backup (pg_dump / pg_restore), physical backup & PITR, logical replication, HA/DR with zone redundancy, patching & maintenance windows, pgAudit & security management |

| Day Two Operations | Azure Monitor metrics, Query Performance Insight, database profiling (catalog views), MVCC & autovacuum, parameter tuning (work_mem, shared_buffers), EXPLAIN & statistics, index tuning, SQL features (partial indexes, JSONB), extensions |

Prerequisites

Before you begin, make sure you have the items below ready. The exact requirements depend on which deployment option you choose — see the comparison table at the bottom of this page.

Azure Subscription

You need an Azure subscription with Contributor access.

- Sign in at https://portal.azure.com

- Authenticate the Azure CLI:

az login

Required Tools

| Tool | Download | Required for |

|---|---|---|

| Azure CLI | Install Azure CLI | All options |

| SSH client | Built into Windows 10+, macOS, Linux | Option 1 (Enterprise) |

| psql (PostgreSQL client) | Pre-installed on jumpbox VM (Option 1), or install locally via PostgreSQL downloads | All options |

| VS Code + PostgreSQL extension (optional) | Download VS Code + install PostgreSQL extension | Option 2, Option 3 (or via SSH tunnel with Option 1) |

Shell Environment

Your choice of shell depends on your deployment option:

- Option 1 (Enterprise): Use Azure Cloud Shell (Bash) to deploy. After deployment, you SSH into the jumpbox Linux VM which has

psql,pg_dump,pg_restore, and other PostgreSQL 18 utilities pre-installed. All database work happens on the jumpbox. - Option 2 (Simple) / Option 3 (BYOS): Use any terminal — Azure Cloud Shell, PowerShell, Bash, or Windows Terminal. You connect to PostgreSQL directly from your machine, so you need

psqlinstalled locally (or use the VS Code PostgreSQL extension or the Azure Portal Connect blade).

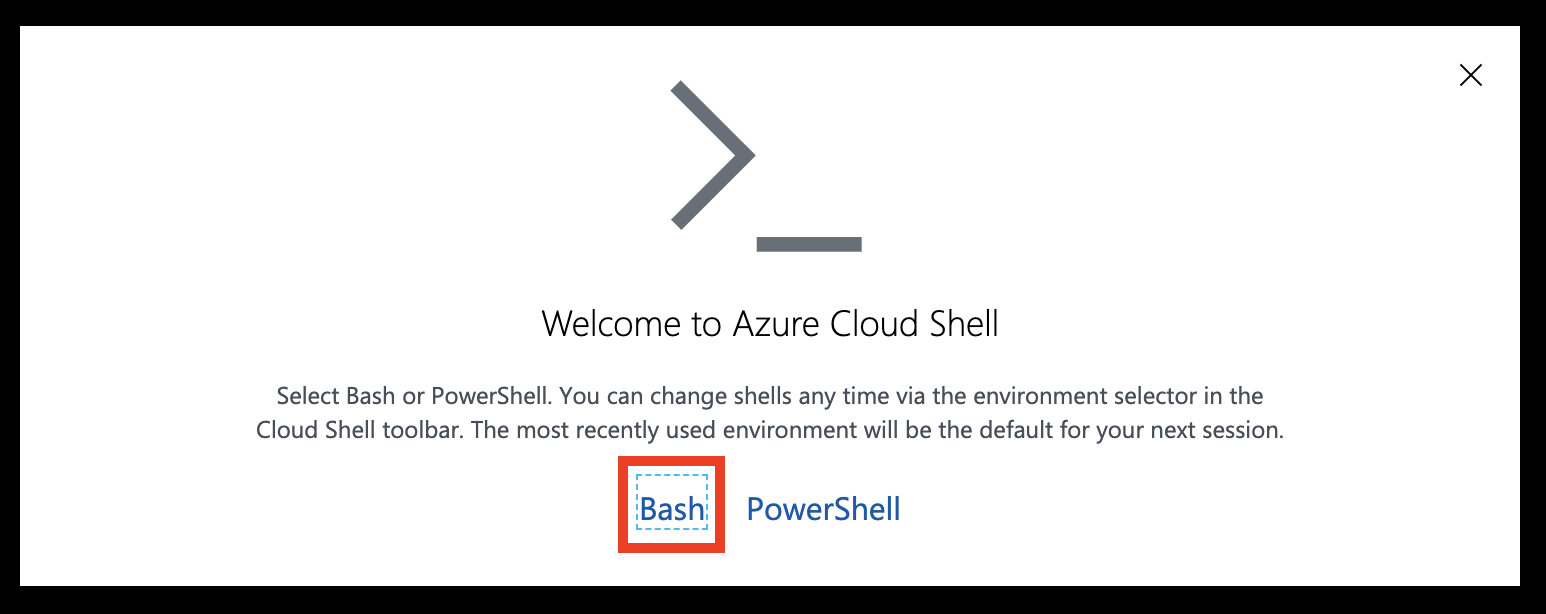

Head over to https://shell.azure.com and sign in.

Select Bash as your shell.

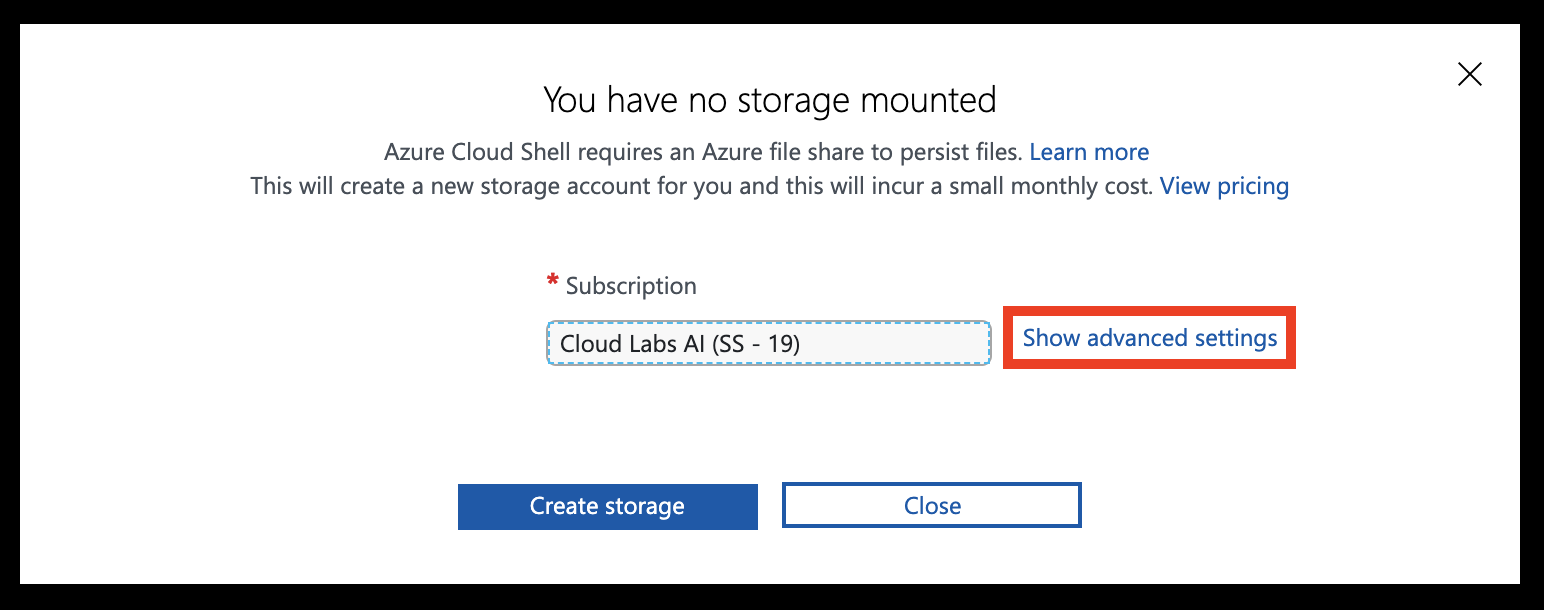

Select Show advanced settings

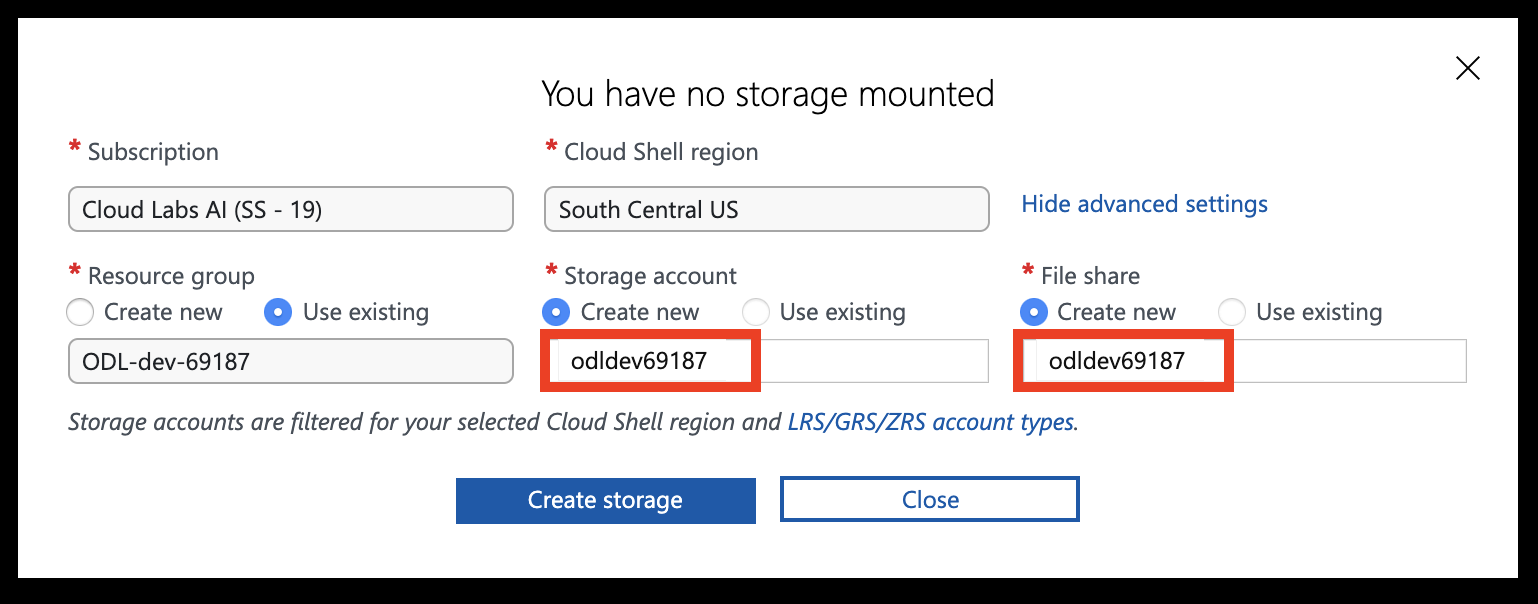

Set the Storage account and File share names to your resource group name (all lowercase, without special characters), then click Create storage.

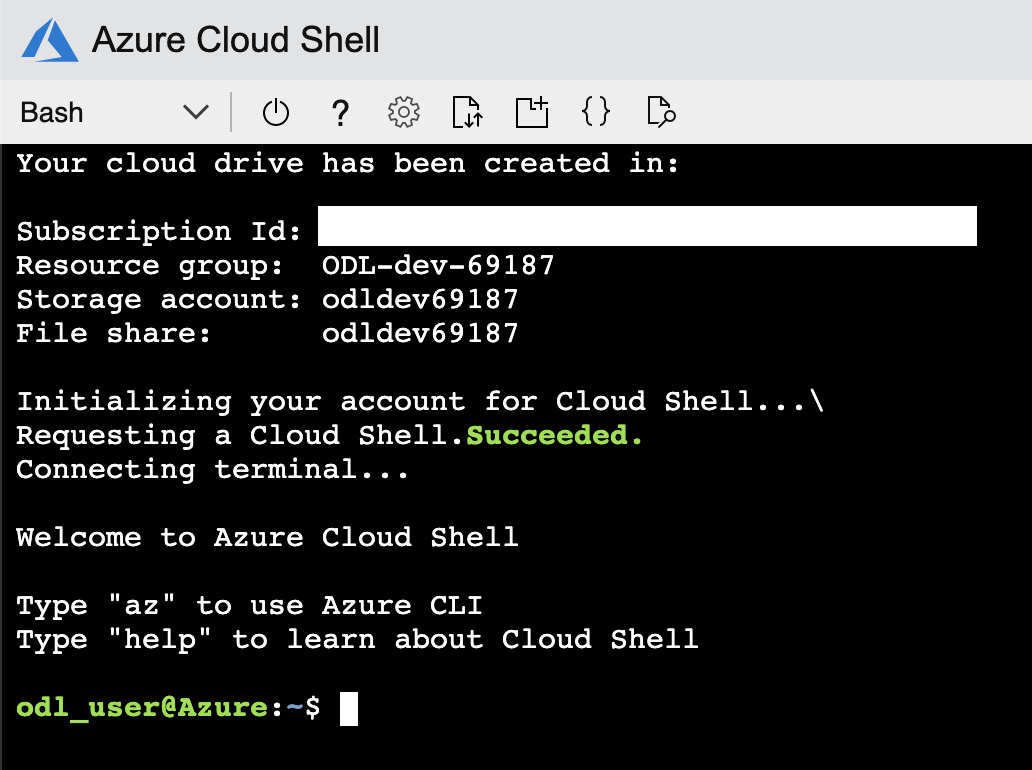

You should now have access to the Azure Cloud Shell.

Deployment Options

The workshop provides three deployment options. Choose the one that best fits your scenario.

| Option 1 — Enterprise | Option 2 — Simple | Option 3 — BYOS | |

|---|---|---|---|

| What’s deployed | Hub-spoke VNet, jumpbox VM, PG Flex (private access), private DNS | PG Flex (public access) + firewall rule | Nothing — you bring your own server |

| How you connect | SSH → jumpbox → psql (private network) |

Direct from your machine (psql, VS Code, Portal) |

Direct or via jumpbox |

| SSH tunnel needed? | Yes — for GUI tools (VS Code, pgAdmin) on your laptop | No | Depends on your setup |

| Cloud Shell required? | Recommended for deployment | No — any terminal works | No |

| Best for | Enterprise private networking scenarios | Quick start, developer-focused | Instructor-led or pre-provisioned |

Option 1 — Enterprise Deployment (Recommended)

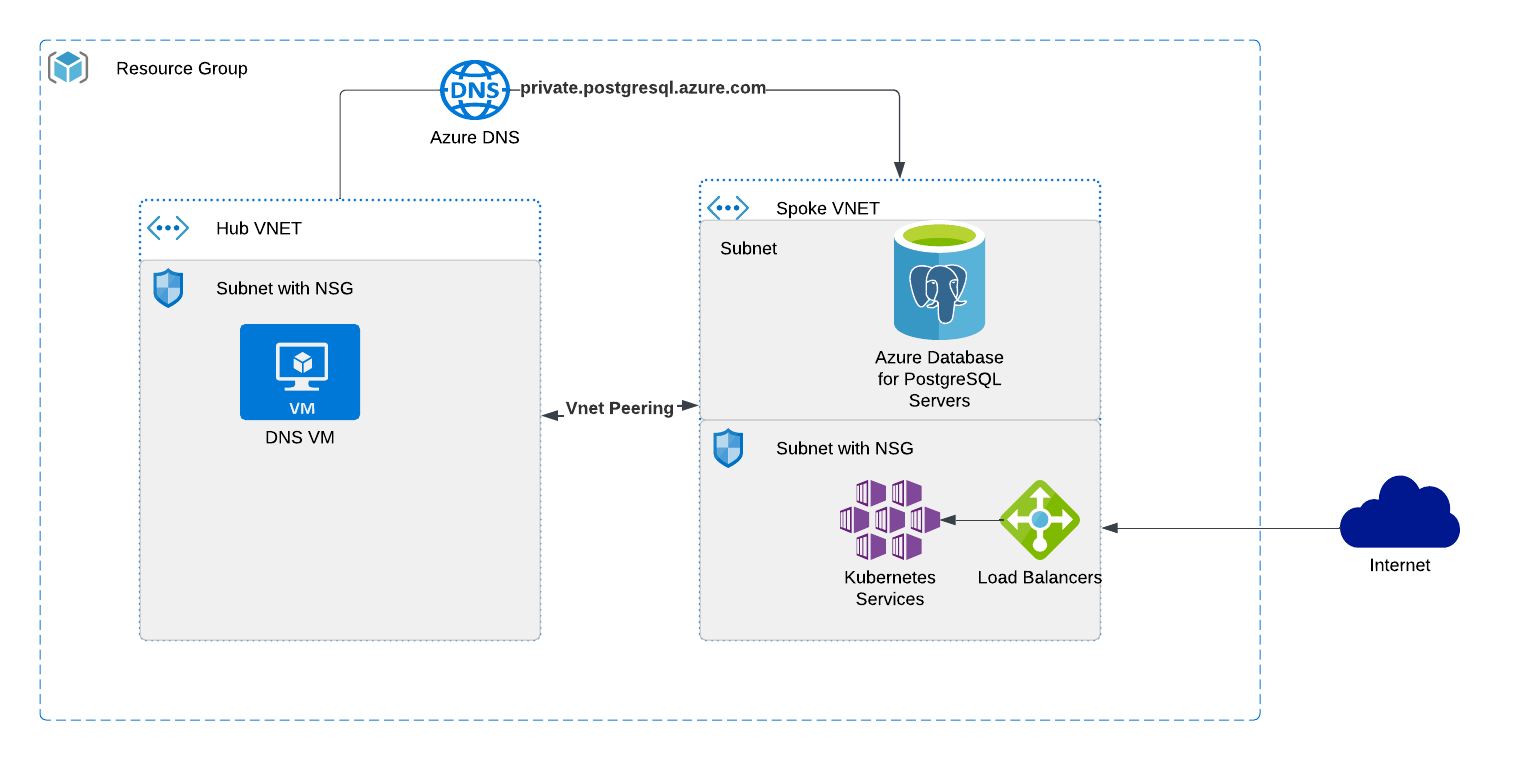

Deploy the complete hub-and-spoke architecture using Bicep. This is the default path used throughout the workshop.

What gets deployed:

- Hub VNet with a jumpbox Linux VM (Rocky Linux 9, PostgreSQL 18 client pre-installed)

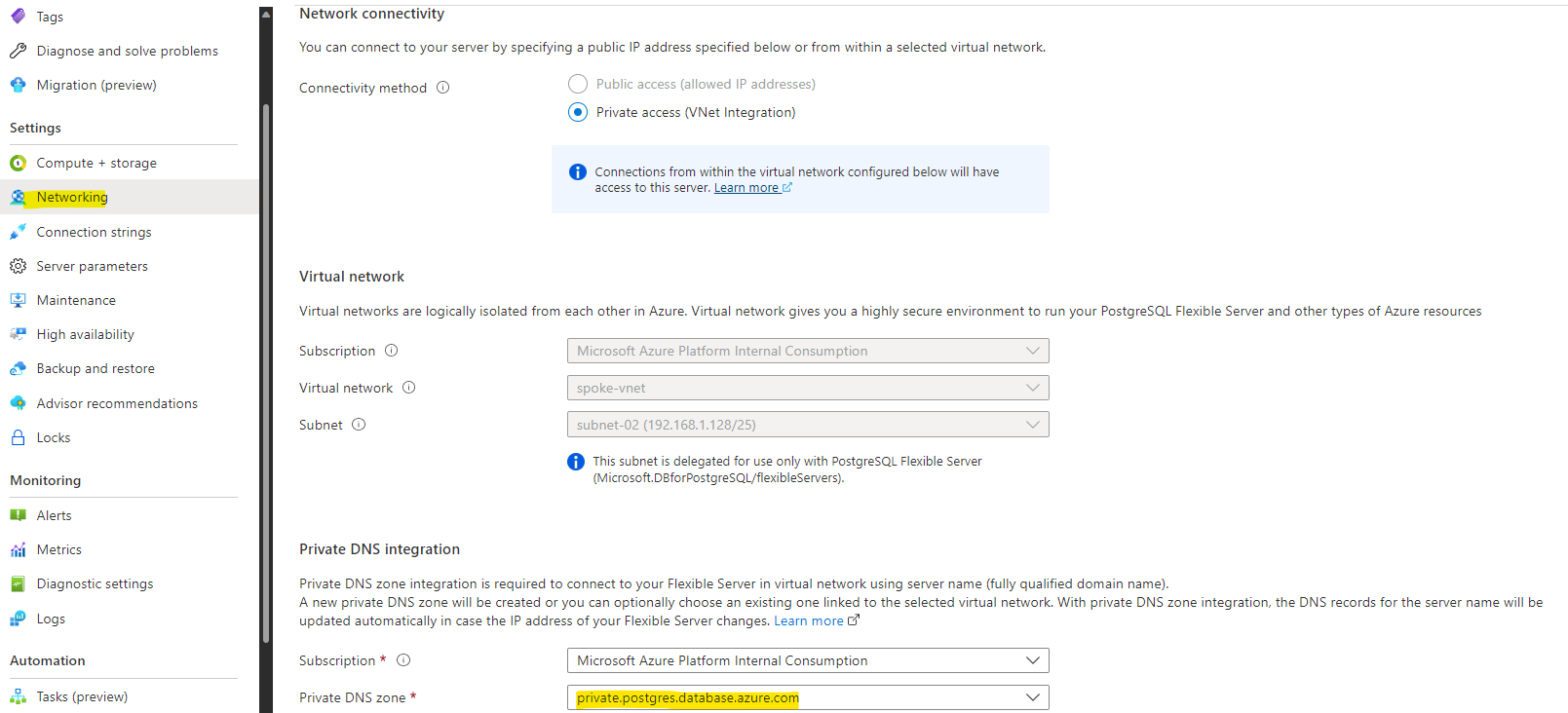

- Spoke VNet with Azure Database for PostgreSQL Flexible Server (private access via delegated subnet)

- VNet peering between hub and spoke

- Private DNS zone (

private.postgres.database.azure.com) - NSG with SSH access

- Storage account (for diagnostic logs)

How to connect:

- SSH into the jumpbox VM from Cloud Shell or your local terminal

- Use

psqldirectly on the jumpbox to reach PostgreSQL over the private network - (Optional) Set up an SSH tunnel to use GUI tools (VS Code PostgreSQL extension, pgAdmin) from your laptop — see Connecting to PostgreSQL for details

Best for: Enterprise-like scenarios with private networking, DNS resolution, and a jump-box access pattern.

Option 2 — Simple Deployment (Public Access)

Deploy only a PostgreSQL Flexible Server with public network access and a firewall rule for your IP. No VNet, no jumpbox, no DNS.

What gets deployed:

- Azure Database for PostgreSQL Flexible Server (public access, firewall whitelist)

How to connect: Directly from your machine using:

psql(install locally or use Cloud Shell)- VS Code PostgreSQL extension

- Azure Portal → PostgreSQL server → Connect blade

No SSH tunnel needed.

Best for: Quick start, simple labs, or when you already have a PostgreSQL client installed and want minimal setup.

See the Deploy with Bicep section for step-by-step instructions.

Option 3 — Bring Your Own Server

If you already have an Azure Database for PostgreSQL Flexible Server (or are attending an instructor-led session where infrastructure is pre-provisioned), skip the deployment section entirely and proceed to Connecting to PostgreSQL.

You will need:

- PostgreSQL server FQDN

- Admin username and password

- Jumpbox VM IP address (if applicable) and SSH credentials

- Network access (either public endpoint with your IP whitelisted, or SSH to the jumpbox)

Tips for Azure Cloud Shell

- Use

code <filename>to open the built-in text editor - Drag and drop files to upload them

- Use

curl -o filename.ext https://url/filename.extto download files directly

Next: Once your prerequisites are ready, head to Deploy Azure Database for PostgreSQL with Bicep to provision your environment.

Getting up and running

Deploy Azure Database for PostgreSQL with Bicep

In this section you will deploy the workshop environment using Bicep. Choose the option that matches your scenario.

Prerequisites

- Windows 10/11 with PowerShell, macOS, or Linux (or use Azure Cloud Shell)

- Azure CLI installed

- An Azure subscription with Contributor access

Option 1 — Enterprise Deployment

Deploys the full hub-and-spoke architecture: jumpbox VM, private VNet, PostgreSQL Flexible Server with private access, and a private DNS zone.

Step 1 — Download the Bicep templates

Open Azure Cloud Shell (Bash), or a local terminal with Azure CLI installed, and download the templates:

curl -O https://pg.azure-workshops.cloud/scripts/bicep.zip

unzip bicep.zip

cd bicep

PowerShell? Use

Invoke-WebRequestinstead:Invoke-WebRequest -Uri https://pg.azure-workshops.cloud/scripts/bicep.zip -OutFile bicep.zip Expand-Archive bicep.zip -DestinationPath . cd bicep

Step 2 — Log in to Azure

az login

This opens a browser window. Sign in with your Azure account.

Verify you are on the correct subscription:

az account show --query "{name:name, id:id, state:state}" -o table

If the subscription shown is not the one you intend to use, list all available subscriptions and set the correct one:

az account list --query "[].{Name:name, ID:id, Default:isDefault}" -o table

az account set --subscription "<subscription-name-or-id>"

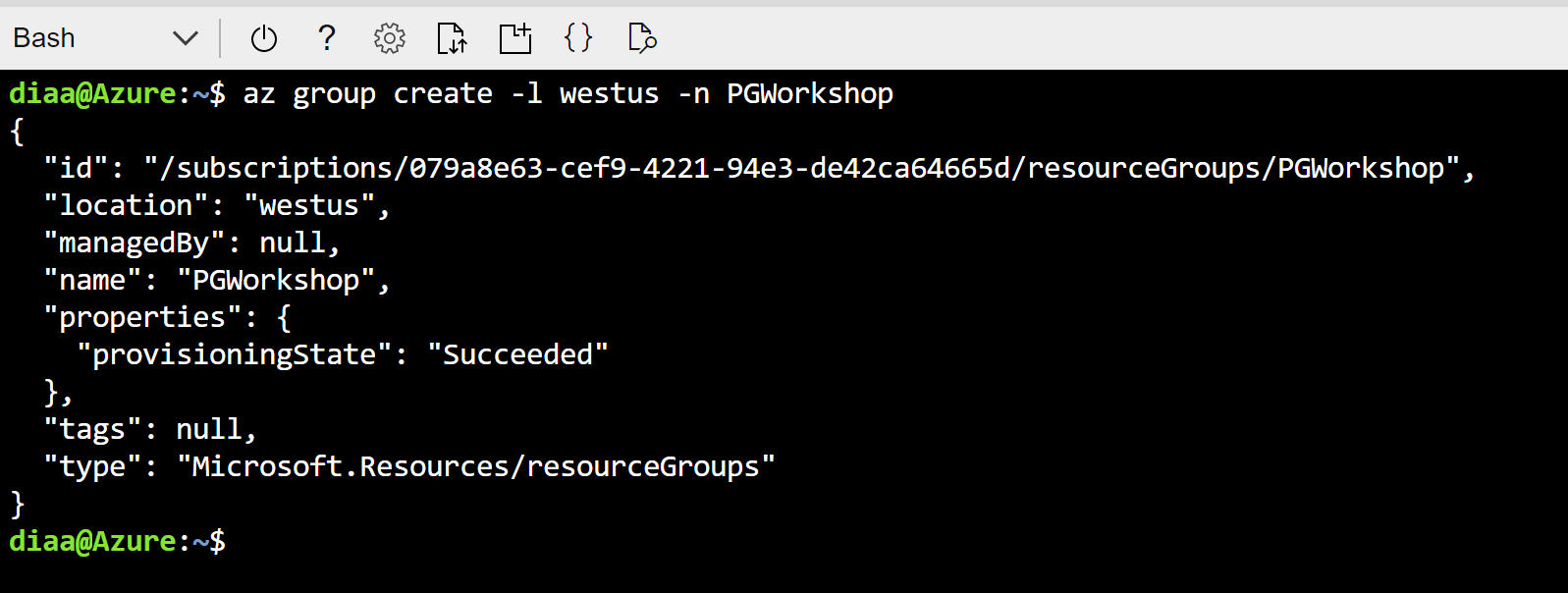

Step 3 — Create the resource group

az group create --name PG-Workshop --location uksouth

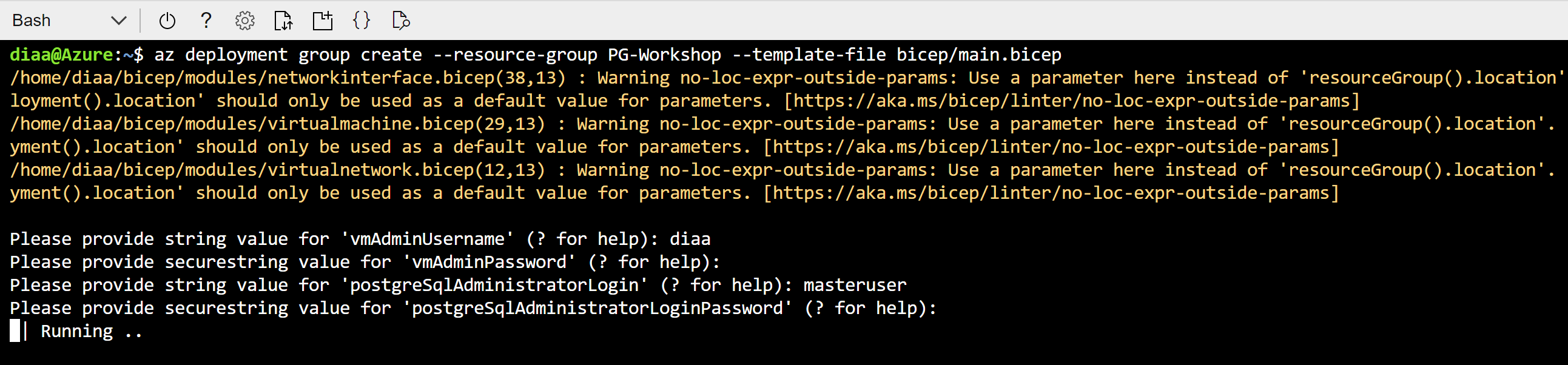

Step 4 — Deploy the Bicep template

az deployment group create --resource-group PG-Workshop --template-file main.bicep

You will be prompted for four values:

| Parameter | Description |

|---|---|

vmAdminUsername |

Username for the jumpbox VM (e.g. workshopuser) |

vmAdminPassword |

A strong password for the jumpbox VM |

postgreSqlAdministratorLogin |

Username for PostgreSQL — use your name, avoid admin or root |

postgreSqlAdministratorLoginPassword |

A strong password (min 8 chars, mix of upper/lower/number/special) |

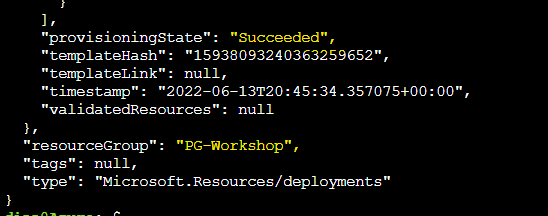

The deployment takes approximately 10–15 minutes. When it finishes, note the outputs — they contain the jumpbox public IP and the PostgreSQL FQDN.

Step 5 — Verify resources and collect connection details

Go to Resource Groups in the Azure Portal and click on PG-Workshop. You should see:

- Jumpbox VM (

jumpbox) - PostgreSQL Flexible Server

- Hub and Spoke virtual networks

- Private DNS zone

- Network security group

- Storage account

Keep these values accessible — you will use them in every subsequent section:

| Value | Where to find it |

|---|---|

| Jumpbox VM public IP | Azure Portal → PG-Workshop → jumpbox VM → Overview → Public IP address |

| PostgreSQL FQDN | Azure Portal → PG-Workshop → PostgreSQL Flexible Server → Overview → Server name |

| VM admin username | The vmAdminUsername you entered in Step 4 |

| PostgreSQL admin username | The postgreSqlAdministratorLogin you entered in Step 4 |

Step 6 — SSH into the jumpbox and verify connectivity

ssh <vmAdminUsername>@<jumpbox-public-ip>

Use the password you provided in Step 4. Once connected, verify the PostgreSQL client is installed:

psql --version

You should see psql (PostgreSQL) 18.x. Then test connectivity to the database:

psql -h <postgresql-fqdn> -U <pgAdminUsername> -d postgres

Enter the PostgreSQL password when prompted. If you see the postgres=> prompt, the deployment is working correctly.

Check the PostgreSQL server version:

SELECT version();

You should see output containing PostgreSQL 18.x. To exit psql:

\q

Next: Proceed to Connecting to PostgreSQL for detailed connection methods, SSH tunnels, and VS Code setup.

Option 2 — Simple Deployment (Public Network)

Deploys only a PostgreSQL Flexible Server with a public endpoint and a firewall rule for your IP. No jumpbox VM is created — you connect directly from your machine.

Step 1 — Download templates and navigate to the simple folder

If you haven’t already downloaded the templates, do so now (see Option 1 — Step 1). Then navigate to the simple folder:

cd bicep/simple

Step 2 — Log in and create the resource group

Skip this step if you already logged in and created the resource group above.

az login

az group create --name PG-Workshop --location uksouth

Step 3 — Get your public IP

On PowerShell (Windows):

(Invoke-WebRequest -Uri "https://ifconfig.me/ip").Content

On Bash (Linux/macOS/Cloud Shell):

curl -s ifconfig.me

Note the IP address returned.

Step 4 — Deploy

az deployment group create --resource-group PG-Workshop \

--template-file main.bicep \

--parameters clientIPAddress="<your-public-ip>"

You will be prompted for:

| Parameter | Description |

|---|---|

administratorLogin |

Username for PostgreSQL (e.g. pgadmin) |

administratorPassword |

A strong password (min 12 chars, mix of upper/lower/number/special) |

Step 5 — Connect from your local machine

The deployment output includes a ready-to-use psqlCommand. If you have psql installed locally:

psql "host=<server-fqdn> user=<administratorLogin> dbname=postgres sslmode=require"

If you do not have psql installed, you can connect from the Azure Portal: go to your PostgreSQL server → Connect blade.

Step 6 — Verify resources and collect connection details

Go to Resource Groups in the Azure Portal and click on PG-Workshop. You should see:

- PostgreSQL Flexible Server

- Firewall rule with your IP address

Keep these values accessible — you will use them in every subsequent section:

| Value | Where to find it |

|---|---|

| PostgreSQL FQDN | Azure Portal → PG-Workshop → PostgreSQL Flexible Server → Overview → Server name |

| PostgreSQL admin username | The administratorLogin you entered in Step 4 |

Once connected via psql, check the PostgreSQL server version:

SELECT version();

You should see output containing PostgreSQL 18.x. To exit psql:

\q

Next: Proceed to Connecting to PostgreSQL for detailed connection methods and VS Code setup.

Connecting to PostgreSQL

This section covers how to connect to your Azure Database for PostgreSQL Flexible Server, set up convenient environment variables, and store credentials securely. Since the database is deployed inside a VNet with private access, you need to go through the jumpbox VM to reach it.

You will need the following values from your deployment output:

- Jumpbox Public IP (

vmPublicIp) - PostgreSQL FQDN (

postgreSqlFqdn) - PostgreSQL Admin Username (

postgreSqlUsername) - VM Admin Username (

vmUsername)

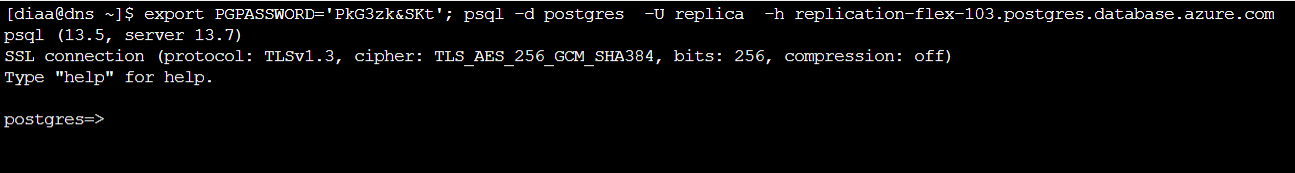

Method 1: SSH into the Jumpbox and use psql

The simplest approach — SSH into the jumpbox and connect directly.

# SSH into the jumpbox

ssh <vmUsername>@<jumpbox-ip>

Once on the jumpbox, connect to PostgreSQL:

psql -h <postgresql-fqdn> -U <pgadmin> -d postgres

You should see the PostgreSQL prompt. Verify with:

SELECT version();

Tip: After verifying your connection works, set up environment variables and

.pgpass(see the Connection Best Practices section below) so you never have to type the full connection string again.

Method 2: SSH Tunnel (connect from your local machine)

Create an SSH tunnel to forward a local port through the jumpbox to the PostgreSQL server.

Step 1: Create the tunnel

Open a terminal on your local machine:

ssh -v -fN -L 5433:<postgresql-fqdn>:5432 <vmUsername>@<jumpbox-ip>

Flags explained:

-v— verbose output so you can see the tunnel being established-f— run in background after authentication-N— don’t execute a remote command (tunnel only)-L 5433:<fqdn>:5432— forward local port 5433 to the remote PostgreSQL port 5432

Step 2: Verify the tunnel is open

On Windows (PowerShell):

netstat -an | findstr 5433

On Linux/macOS:

lsof -i :5433

You should see the port in LISTEN state.

Step 3: Connect through the tunnel

psql -h localhost -p 5433 -U <pgadmin> -d postgres

To close the tunnel:

# Find the SSH process

ps aux | grep "ssh -.*5433"

# Kill it

kill <pid>

On Windows:

Get-Process ssh | Where-Object { $_.CommandLine -match "5433" } | Stop-Process

Method 3: VS Code PostgreSQL Extension

The VS Code PostgreSQL extension lets you run queries directly from the editor.

Step 1: Set up the SSH tunnel

You need the tunnel from Method 2 running first. Open a terminal and run:

ssh -fN -L 5433:<postgresql-fqdn>:5432 <vmUsername>@<jumpbox-ip>

Step 2: Install the PostgreSQL extension

- Open VS Code

- Go to Extensions (

Ctrl+Shift+X) - Search for PostgreSQL (by Microsoft)

- Click Install

Step 3: Create a new connection

- Click the PostgreSQL icon in the left sidebar

- Click + Create Connection Profile

- Fill in:

- Server name:

localhost - Port:

5433 - Database name:

postgres - Authentication type: Password

- User name: your PostgreSQL admin username

- Password: your PostgreSQL admin password

- Connection name: give it a friendly name (e.g.,

Workshop DB)

- Server name:

- Click Connect

Step 4: Run queries

- Right-click your connection and select New Query

- Type your SQL:

SELECT version();

- Press

Ctrl+Shift+Eor click Run to execute

You can also browse tables, views, and functions in the sidebar tree.

Method 4: VS Code PostgreSQL Extension (Simple Deployment)

If you deployed using bicep/simple/main.bicep, the server has public access enabled with a firewall rule for your IP. No SSH tunnel needed.

- Open the PostgreSQL sidebar in VS Code

- Click + Create Connection Profile

- Fill in:

- Server name:

<postgresql-fqdn>(directly, no tunnel) - Port:

5432 - Database name:

postgres - User name: your admin username

- Password: your admin password

- Server name:

- Connect and query directly

Connection Best Practices

Once you have verified your connection works using one of the methods above, set up environment variables and a .pgpass file on the jumpbox. This avoids typing connection parameters repeatedly and keeps your password out of shell history.

Step 1 — Set libpq Environment Variables

libpq environment variables are recognized by psql, pg_dump, pg_restore, and all PostgreSQL client tools.

Create a file on the jumpbox:

cat > ~/.pg_azure << 'EOF'

export PGHOST=<postgresql-fqdn>

export PGUSER=<pgadmin>

export PGDATABASE=orders_demo

export PGSSLMODE=require

EOF

Or download the template and edit it:

curl -o ~/.pg_azure https://pg.azure-workshops.cloud/scripts/pg_azure

Then update the values in ~/.pg_azure with your actual hostname and username.

Source it in your current session:

source ~/.pg_azure

Tip: Add

source ~/.pg_azureto your~/.bashrcso the variables are set automatically on every login.

Notice that PGPASSWORD is intentionally omitted. Exporting your password as an environment variable is insecure — it is visible in /proc/<pid>/environ, in shell history, and to any process running as the same user. Use .pgpass instead.

Step 2 — Store Passwords Securely with .pgpass

The ~/.pgpass file provides password lookup for libpq clients without exposing the password in the environment. The format is:

hostname:port:database:username:password

Create the file:

echo "<postgresql-fqdn>:5432:*:<pgadmin>:<your-password>" > ~/.pgpass

chmod 600 ~/.pgpass

The * in the database field means this password applies to all databases on that server. The chmod 600 is required — PostgreSQL ignores .pgpass if the permissions are too open.

Now connect without any parameters:

psql

You should connect directly to orders_demo (from PGDATABASE) on the Flexible Server (from PGHOST) with no password prompt.

Step 3 — Getting the Connection String from the Azure Portal

The Azure Portal provides ready-made connection strings for various languages and tools.

- Go to your PostgreSQL Flexible Server in the Azure Portal

- In the left menu, click Connection strings

- Copy the psql connection string

This is useful as a reference — but for day-to-day work during the workshop, the environment variables + .pgpass approach above is faster and more secure.

Troubleshooting

SSH tunnel fails to connect:

- Verify the jumpbox public IP is correct

- Check that NSG allows SSH (port 22) from your IP

- Ensure the jumpbox VM is running:

az vm show -g apg-workshop-rg -n jumpbox --query powerState

psql: could not connect to server:

- Verify the tunnel is active:

netstat -an | findstr 5433 - Check that you are using the correct port (

5433for tunnel,5432for direct) - Verify the PostgreSQL FQDN resolves on the jumpbox:

nslookup <postgresql-fqdn>

VS Code connection timeout:

- Make sure the SSH tunnel is running before creating the connection

- Check that the port matches (5433 for tunnel)

- Try disconnecting and reconnecting the profile

DNS resolution fails on the jumpbox:

- Verify BIND is running:

systemctl status named - Test resolution:

dig <postgresql-fqdn> - If BIND is not running, restart it:

sudo systemctl restart named

.pgpass not working:

- Check permissions:

ls -la ~/.pgpass— must be-rw-------(600) - Verify the hostname matches exactly (including

.postgres.database.azure.com) - Ensure no trailing whitespace in the file:

cat -A ~/.pgpass

psql: The PostgreSQL Command-Line Client

psql is the interactive terminal for PostgreSQL. You will use it throughout this workshop to run queries, explore schemas, import data, and administer the server. This section teaches you the essential skills for working with psql efficiently.

Prerequisite: You should have connected to PostgreSQL from the jumpbox in the previous section. If your environment variables and

.pgpassare set up, simply runpsqlto connect.

Code block conventions used throughout this workshop:

- ` ```sh ` — run in the Linux shell on the jumpbox

- ` ```sql ` — SQL sent to the PostgreSQL server (type inside psql)

- ` ```psql ` — psql meta-commands (type inside psql, processed by the client — not sent to the server)

Backslash Meta-Commands

Anything you type in psql that begins with a backslash (\) is a meta-command — it is processed by the psql client itself, not sent to the PostgreSQL server.

Note: The meta-commands for exploring databases, schemas, tables, indexes, and roles (

\l,\dt,\dn,\du,\d, etc.) are covered step-by-step in the Load Data section, where you will use them hands-on against real data. This section focuses on thepsqlsession skills you need to work efficiently.

\conninfo — Always Verify Your Connection First

Before running any commands, confirm that you are connected to the right server, database, and user:

\conninfo

Example output:

You are connected to database "postgres" as user "pgadmin" on host "myserver.postgres.database.azure.com" at port "5432".

SSL connection (protocol: TLSv1.3, cipher: TLS_AES_256_GCM_SHA384, bits: 256, compression: off)

What each field tells you:

| Field | Why it matters |

|---|---|

| database | Confirms you are on postgres (default) or orders_demo (after restore) — a wrong database is the #1 cause of “table not found” errors |

| user | Confirms the role you connected as — important for privilege troubleshooting |

| host | Full FQDN of the Flexible Server — confirms you are not on localhost by mistake |

| port | Should be 5432 (direct) or 6432 if you are going through PgBouncer |

| SSL | Confirms the connection is encrypted — Azure Flexible Server enforces TLS by default |

Run \conninfo any time you are unsure which server or database your session is on.

Display Modes

By default, psql displays query results as a horizontal table. This becomes unreadable when tables have many columns.

Toggle expanded (vertical) display:

\x auto

With \x auto, psql automatically switches to vertical display when the output is too wide for your terminal. Try it:

SELECT * FROM pg_stat_activity;

Without \x auto, this is a wall of text. With it, each row is displayed vertically.

Toggle query timing:

\timing

This shows how long each query takes. Enable it now — you will want it for every query in the workshop.

Getting Help

\? -- list all backslash meta-commands

\h -- list all SQL commands

\h CREATE TABLE -- show syntax help for a specific SQL command

Watching a Query

\watch re-runs the last query at a set interval — useful for monitoring:

SELECT count(*) FROM pg_stat_activity;

\watch 2

This re-runs the query every 2 seconds. Press Ctrl+C to stop.

Command History

\s -- print command history

Use Ctrl+R to search history interactively — type part of a previous command and psql will find it.

Inspecting Functions

You can view the source of any function:

\sf abs(bigint)

This prints the CREATE FUNCTION definition — useful for understanding built-in or custom functions.

Running SQL from a File

Instead of pasting SQL into psql, you can run a file:

\i /path/to/script.sql

Or from the command line:

psql -f /path/to/script.sql

This is how you will restore database dumps and run batch scripts later in the workshop.

Running a single command from the shell

If .pgpass and .pg_azure are set up, you can run one-off SQL directly from the shell with -c:

psql -c "CREATE DATABASE orders_demo;"

You will use this in the next section to create the workshop database before loading data.

Quick Reference

| Command | Purpose |

|---|---|

\conninfo |

Show current connection (host, port, user, database, SSL) |

\x auto |

Auto-toggle vertical display for wide results |

\timing |

Toggle query execution time display |

\watch N |

Re-run last query every N seconds |

\s |

Print command history |

\sf <func> |

Show function definition |

\i file |

Run SQL from a file |

\? |

List all backslash meta-commands |

\h <cmd> |

SQL syntax help for a command |

\q |

Quit psql |

Navigation commands (

\l,\dt,\dn,\d <table>,\du, etc.) are covered hands-on in the Load Data section.

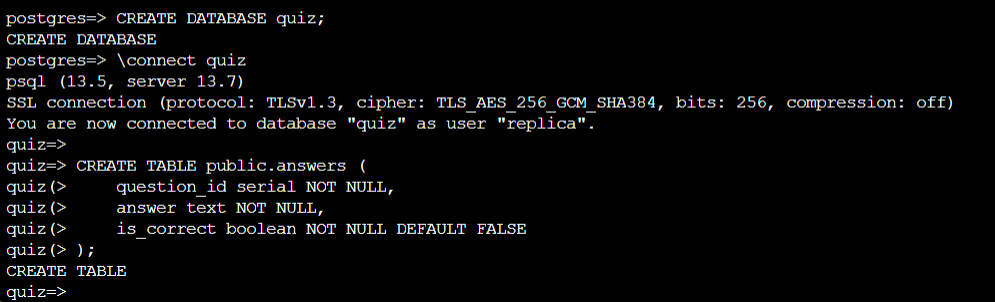

Load Data — Restore the Sample Database

In this section you will restore a sample e-commerce database (orders_demo) with four tables and ~410K rows, then explore its schema to understand the data you will work with throughout the rest of the workshop.

Step 1 — SSH into the Jumpbox

Connect to the jumpbox VM using the public IP from your deployment output:

ssh <vmUsername>@<jumpbox-public-ip>

Replace

<vmUsername>and<jumpbox-public-ip>with the values from your Bicep deployment output.

Step 2 — Connect to PostgreSQL with psql

From the jumpbox, connect to the default postgres database:

psql -h <postgresql-fqdn> -U <pgadmin> -d postgres

You should see the postgres=> prompt.

Step 3 — Explore the Server Before Loading Data

Before restoring any data, run the following meta-commands to understand what is already on the server. These are psql backslash commands — they are not SQL; they are interpreted by the psql client itself.

psql session skills (

\timing,\x,\watch,\conninfo,\i,\?) are covered in the previous psql: The PostgreSQL Command-Line Client section. This step focuses on navigation commands that explore what is on the server.

Tip: Want to see the SQL behind any

\command? Run\set ECHO_HIDDEN onin psql — it will print the underlying query before each result. This is useful for learning and for reproducing meta-commands in other SQL clients like VS Code.

3.1 — List all databases

\l

What it does: Lists every database in the PostgreSQL cluster, including the owner, encoding, collation, and access privileges. You will see the default databases (postgres, azure_maintenance, azure_sys). After the restore in Step 4 you will see orders_demo here as well.

3.2 — List schemas

\dn

What it does: Lists all schemas in the current database. By default you will see public. Schemas are namespaces that let you organise tables, views, and functions within a single database.

3.3 — List all tables in all schemas

\dt *.*

What it does: The wildcard pattern *.* means “every table in every schema.” This shows you system catalog tables (in pg_catalog and information_schema) plus any user tables in public. Use this to get a quick inventory of what exists.

3.4 — List all views in all schemas

\dv *.*

What it does: Same idea as \dt but for views. Views are stored queries that act like virtual tables. You will see many built-in system views in pg_catalog and information_schema.

3.5 — List all indexes in all schemas

\di *.*

What it does: Lists every index across all schemas. Indexes speed up queries by providing fast lookup paths. Notice which tables have indexes and which do not — this will be relevant when you run the heavy queries in the next section.

3.6 — Additional useful meta-commands

| Command | Description |

|---|---|

\l+ |

Databases with sizes and tablespace info |

\dt+ |

Tables with sizes (current database, public schema) |

\d <table> |

Describe a specific table — columns, types, constraints |

\df *.* |

List all functions in all schemas |

\du |

List all roles / users |

\conninfo |

Show current connection info (host, port, user, database) |

\timing |

Toggle query execution timing on/off — very useful for benchmarks |

\x |

Toggle expanded (vertical) display for wide result sets |

Tip: Run

\timingnow so that every query you run from this point forward shows how long it took.

Step 4 — Download and Restore the Sample Database

4.1 — Exit psql

\q

4.2 — Download the dump file

The workshop uses a pre-built custom-format dump that contains four tables with realistic e-commerce data (~410K rows total).

curl -L -O "https://pg.azure-workshops.cloud/database/orders_demo.dump"

Verify the file downloaded correctly:

ls -lh orders_demo.dump

You should see a file of roughly 5–10 MB. If the file is missing or 0 bytes, check the URL and try again.

4.3 — Create the target database

psql -h <postgresql-fqdn> -U <pgadmin> -d postgres -c "CREATE DATABASE orders_demo;"

Tip: If you configured

.pg_azureand.pgpassin the previous section, you can omit-hand-Uand you won’t be prompted for a password:psql -c "CREATE DATABASE orders_demo;"

4.4 — Restore the dump with pg_restore

pg_restore -h <postgresql-fqdn> -U <pgadmin> -d orders_demo --no-owner --no-privileges --verbose orders_demo.dump

With

.pg_azureand.pgpassconfigured:pg_restore -d orders_demo --no-owner --no-privileges --verbose orders_demo.dump

Flag reference:

--no-owner— skip ownership assignment (avoids errors when the original owner doesn’t exist on this server)--no-privileges— skip privilege (GRANT/REVOKE) statements from the source--verbose— print progress as each object is restored

You should see output listing each table and index being created, followed by data loading via COPY. If you see errors about roles not existing, they are safe to ignore (that’s what --no-owner handles).

4.5 — Verify the restore succeeded

Connect to the new database and confirm the tables exist with data:

psql -h <postgresql-fqdn> -U <pgadmin> -d orders_demo -c "

SELECT 'customers' AS tbl, COUNT(*) FROM customers

UNION ALL SELECT 'products', COUNT(*) FROM products

UNION ALL SELECT 'orders', COUNT(*) FROM orders

UNION ALL SELECT 'order_items', COUNT(*) FROM order_items;"

Expected output:

tbl | count

------------+--------

customers | 10000

products | 500

orders | 100000

order_items| 300000

If you see all four tables with the expected row counts, the restore was successful.

Step 5 — Explore the Restored Database

Connect to the new database interactively:

psql -h <postgresql-fqdn> -U <pgadmin> -d orders_demo

5.1 — Confirm the tables exist

\dt

You should see four tables:

| Table | Description |

|---|---|

customers |

10,000 customer profiles with city, country, loyalty points |

products |

500 products across 5 categories |

orders |

100,000 orders over the past year with status and shipping info |

order_items |

300,000 line items linking orders to products |

5.2 — Inspect table structures

\d customers

\d products

\d orders

\d order_items

Pay attention to data types, primary keys, and whether any foreign key constraints exist.

5.3 — Check row counts

SELECT 'customers' AS table_name, COUNT(*) AS rows FROM customers

UNION ALL

SELECT 'products', COUNT(*) FROM products

UNION ALL

SELECT 'orders', COUNT(*) FROM orders

UNION ALL

SELECT 'order_items', COUNT(*) FROM order_items;

5.4 — Check table sizes

SELECT relname AS table_name,

pg_size_pretty(pg_total_relation_size(oid)) AS total_size,

pg_size_pretty(pg_relation_size(oid)) AS table_size,

pg_size_pretty(pg_indexes_size(oid)) AS index_size

FROM pg_class

WHERE relname IN ('customers','products','orders','order_items')

ORDER BY pg_total_relation_size(oid) DESC;

5.5 — List indexes

\di

Note: You will see only primary-key indexes. There are no additional indexes on foreign keys or commonly queried columns — this is intentional. The demo queries in the next section rely on sequential scans to generate CPU load.

5.6 — Explore sample data

-- Peek at customers

SELECT * FROM customers LIMIT 5;

-- Product categories

SELECT category, COUNT(*) AS count FROM products GROUP BY category ORDER BY count DESC;

-- Order status distribution

SELECT status, COUNT(*) AS count FROM orders GROUP BY status ORDER BY count DESC;

-- Most recent orders

SELECT order_id, customer_id, order_date, total_amount, status

FROM orders ORDER BY order_date DESC LIMIT 10;

-- Average items per order

SELECT ROUND(AVG(item_count), 2) AS avg_items_per_order

FROM (SELECT order_id, COUNT(*) AS item_count FROM order_items GROUP BY order_id) sub;

5.7 — Check for missing indexes (useful diagnostic)

SELECT relname AS table,

seq_scan, seq_tup_read,

idx_scan, idx_tup_fetch,

CASE WHEN seq_scan > 0

THEN ROUND(seq_tup_read::numeric / seq_scan, 0)

ELSE 0

END AS avg_rows_per_seq_scan

FROM pg_stat_user_tables

ORDER BY seq_tup_read DESC;

This shows how many sequential scans vs. index scans each table has received. After running the workload queries in the next section, revisit this to see the impact.

Step 6 — Enable pg_stat_statements

pg_stat_statements tracks execution statistics for all SQL statements. It is required for Query Performance Insight in the Azure Portal and for the Monitoring and Index Tuning sections later in the workshop. Set it up now so it collects data from the start.

- Go to Azure Portal → your PostgreSQL server → Server parameters

- Search for

shared_preload_libraries→ ensure pg_stat_statements is checked - Search for

pg_stat_statements.track→ set to ALL - Click Save — this requires a server restart

After the restart, connect from the jumpbox and create the extension:

CREATE EXTENSION IF NOT EXISTS pg_stat_statements;

Verify it is working:

SELECT calls, query FROM pg_stat_statements LIMIT 5;

Run Demo Workload — Break Things on Purpose

In this section you will run a set of intentionally unoptimised, CPU-heavy queries against the orders_demo database you restored in the previous section. The goal is to generate realistic workload that you will observe in the Monitoring section and fix in the Index Tuning Lab.

Prerequisite: You should have the

orders_demodatabase restored from the Load Data section and be connected from the jumpbox.

Connect to the database if you are not already:

psql -h <postgresql-fqdn> -U <pgadmin> -d orders_demo

Step 1 — Run CPU-Heavy Demo Queries

Important: Enable timing first so you can see how long each query takes:

\timing

These queries are intentionally unoptimised — they trigger full sequential scans, large sorts, and heavy computation. This is exactly the kind of workload you will observe in the next section — Monitoring PostgreSQL with Azure Portal.

Query 1 — Full Cross-Join Aggregation (extreme CPU)

SELECT c.city, c.country, p.category,

COUNT(DISTINCT o.order_id) AS total_orders,

SUM(oi.quantity * oi.unit_price * (1 - oi.discount/100)) AS revenue

FROM customers c

JOIN orders o ON o.customer_id = c.customer_id

JOIN order_items oi ON oi.order_id = o.order_id

JOIN products p ON p.product_id = oi.product_id

GROUP BY c.city, c.country, p.category

ORDER BY revenue DESC;

Why it is heavy: This joins all four tables (10K × 100K × 300K × 500 rows of data in the pipeline) and groups by three columns. The COUNT(DISTINCT ...) forces a sort-based deduplication for every group. With no indexes on the join columns other than primary keys, the planner must do multiple sequential scans and hash joins, then a large sort for the ORDER BY.

Query 2 — Correlated Subquery (no index, sequential scans)

SELECT c.customer_id, c.first_name, c.last_name,

(SELECT COUNT(*) FROM orders o WHERE o.customer_id = c.customer_id) AS order_count,

(SELECT COALESCE(SUM(total_amount),0) FROM orders o WHERE o.customer_id = c.customer_id) AS lifetime_value

FROM customers c

ORDER BY lifetime_value DESC

LIMIT 100;

Why it is heavy: For each of the 10,000 customers, PostgreSQL executes two subqueries against the 100,000-row orders table. Without an index on orders.customer_id, each subquery triggers a full sequential scan — resulting in ~20,000 sequential scans of the orders table in total.

Query 3 — Window Functions Over Large Dataset (memory + CPU)

SELECT order_id, customer_id, order_date, total_amount,

SUM(total_amount) OVER (PARTITION BY customer_id ORDER BY order_date) AS running_total,

ROW_NUMBER() OVER (PARTITION BY customer_id ORDER BY total_amount DESC) AS rank_by_amount,

AVG(total_amount) OVER (PARTITION BY shipping_country ORDER BY order_date ROWS BETWEEN 100 PRECEDING AND CURRENT ROW) AS moving_avg

FROM orders;

Why it is heavy: Three separate window functions each require sorting the full 100,000-row orders table by different partition/order keys. The running_total computes a cumulative sum per customer; rank_by_amount assigns a row number per customer by descending amount; moving_avg computes a sliding 101-row average across shipping countries. PostgreSQL may need to materialise intermediate sort results to temp files if work_mem is limited.

Query 4 — Heavy Text Computation + Sort (CPU + temp disk)

SELECT c.email, md5(c.email || o.order_id::TEXT) AS hash_key,

string_agg(p.product_name, ', ' ORDER BY oi.unit_price DESC) AS products_bought

FROM customers c

JOIN orders o ON o.customer_id = c.customer_id

JOIN order_items oi ON oi.order_id = o.order_id

JOIN products p ON p.product_id = oi.product_id

GROUP BY c.email, o.order_id

ORDER BY hash_key;

Why it is heavy: The md5() function computes a hash for every row in the join result (~300,000 rows). string_agg(... ORDER BY ...) sorts product names within each group by descending price. The final ORDER BY hash_key sorts all ~100,000 result groups by a computed hash — the result is essentially random, which defeats any natural ordering and forces a full sort.

Query 5 — Repeated Sequential Scan Loop (sustained CPU for ~30s+)

DO $$

BEGIN

FOR i IN 1..20 LOOP

PERFORM COUNT(*) FROM orders o

JOIN order_items oi ON oi.order_id = o.order_id

WHERE md5(o.status || oi.quantity::TEXT) LIKE '00%';

END LOOP;

END $$;

Why it is heavy: This PL/pgSQL anonymous block runs the same expensive query 20 times in a tight loop. Each iteration joins 100K orders with 300K order items, computes md5() on every joined row, and then filters by a text pattern. The LIKE '00%' filter on the hash cannot use any index, so every iteration is a full sequential scan + hash join + function evaluation. The result is sustained, constant CPU utilisation for tens of seconds.

Understanding the syntax:

| Element | What it does |

|---|---|

DO |

Executes an anonymous code block — like a one-off function you don’t need to save |

$$ ... $$ |

Dollar-quoted string delimiters — replaces single quotes so you don’t have to escape quotes inside the block |

BEGIN ... END |

Marks the start and end of the PL/pgSQL code block |

FOR i IN 1..20 LOOP |

Integer FOR loop — runs the body 20 times with i counting from 1 to 20 |

PERFORM |

Runs a SELECT but discards the result — used when you want the side effects (CPU load) but don’t need the output |

Tip: Increase the loop count to

50or100for longer sustained load:DO $$ BEGIN FOR i IN 1..100 LOOP PERFORM COUNT(*) FROM orders o JOIN order_items oi ON oi.order_id = o.order_id WHERE md5(o.status || oi.quantity::TEXT) LIKE '00%'; END LOOP; END $$;

Query 6 — Large Temp-Table Sort + Distinct (IOPS + memory pressure)

SELECT DISTINCT ON (customer_id)

customer_id, order_id, order_date, total_amount

FROM orders

ORDER BY customer_id, total_amount DESC, order_date DESC;

Why it is heavy: DISTINCT ON requires the result to be sorted by the grouping column (customer_id) and then by the tie-breaking columns (total_amount DESC, order_date DESC). This forces a full sort of all 100,000 orders. If the sort does not fit in work_mem, PostgreSQL spills to temp files on disk, creating I/O pressure.

Step 2 — Generate Maximum Load (Concurrent Execution)

For the best demonstration during monitoring exercises, run multiple queries at the same time from separate psql sessions.

Open three SSH sessions to the jumpbox, connect to orders_demo in each, then run:

| Session | Query | Why |

|---|---|---|

| Session 1 | Query 1 (cross-join aggregation) | Saturates one backend with multi-table joins |

| Session 2 | Query 3 (window functions) | Forces large sorts in another backend |

| Session 3 | Query 5 (loop × 50 or 100) | Sustained CPU from sequential scans |

Running these concurrently will show multiple active backends, high CPU utilisation, temp file usage, and sequential-scan-heavy workload in your monitoring dashboards.

Step 3 — Observe the Impact

After running the workload, check the damage:

Active queries

SELECT pid, state, query_start, LEFT(query, 80) AS query_snippet

FROM pg_stat_activity

WHERE datname = 'orders_demo' AND state != 'idle'

ORDER BY query_start;

Sequential scan statistics (revisit from the Load Data section)

SELECT relname AS table,

seq_scan, seq_tup_read,

idx_scan, idx_tup_fetch

FROM pg_stat_user_tables

ORDER BY seq_tup_read DESC;

How to read this output:

| Column | What it means | What to look for |

|---|---|---|

seq_scan |

Number of sequential (full table) scans since last stats reset | High on large tables = missing index. After the demo workload, orders should show thousands of seq_scans from Query 2 and 5 |

seq_tup_read |

Total rows read by sequential scans | The real cost indicator. Millions or billions = massive wasted I/O |

idx_scan |

Number of index scans | Should be much higher than seq_scan on large, frequently queried tables. If it is 0, no index is being used |

idx_tup_fetch |

Rows fetched via indexes | Each row was targeted — this is efficient. Compare to seq_tup_read: a large gap means most reads are wasteful full scans |

Rule of thumb: If

seq_tup_readis orders of magnitude larger thanidx_tup_fetchon a table, that table almost certainly needs an index on the columns used in WHERE/JOIN clauses. You will fix this in the Index Tuning Lab.

Temp file usage

SELECT datname, temp_files, pg_size_pretty(temp_bytes) AS temp_size

FROM pg_stat_database

WHERE datname = 'orders_demo';

How to read this output:

| Column | What it means | What to look for |

|---|---|---|

temp_files |

Number of temp files created since last stats reset | Any value > 0 means queries spilled sorts or hashes to disk because they exceeded work_mem |

temp_bytes |

Total bytes written to temp files | Large values (hundreds of MB+) indicate heavy sort/hash operations. Queries 3, 4, and 6 are the likely culprits |

Total database size

SELECT pg_size_pretty(pg_database_size('orders_demo')) AS db_size;

These statistics feed directly into the next section — Monitoring PostgreSQL with Azure Portal. Leave them as-is — do not reset the stats yet.

Monitoring PostgreSQL with Azure Portal

In the previous section you ran six heavy queries against the orders_demo database and generated concurrent load. Now you will use the Azure Portal to see exactly what those queries did to your server — CPU spikes, memory pressure, IOPS saturation, temp file spills, and long-running sessions.

By the end of this section you will be able to:

- Navigate the built-in metrics for Azure Database for PostgreSQL Flexible Server

- Correlate a metric spike to a specific query or behaviour

- Use Query Performance Insight to find the most expensive queries

- Configure an alert rule so you get notified before problems escalate

- Use diagnostic settings to send logs to Log Analytics for deeper analysis

Prerequisite: You should have run the demo workload from the Run Demo Workload section. The metrics and query stats shown here are generated by that workload. If you skipped it, go back and run at least Queries 1, 3, and 5 concurrently before continuing.

Step 1 — Open the Metrics Blade

- Go to the Azure Portal → your resource group → click your PostgreSQL Flexible Server

- In the left menu, under Monitoring, click Metrics

You will see a chart area with a dropdown to select metrics. This is Azure Monitor Metrics Explorer — it works the same way for all Azure resources, but the available metrics are specific to PostgreSQL.

Step 2 — Observe CPU Impact

2.1 — Add the CPU metric

- Click Add metric

- Metric namespace: leave default

- Metric: CPU percent

- Aggregation: Max

- Set the time range to Last 1 hour (top-right)

What to look for: You should see a clear spike corresponding to when you ran the concurrent workload (Queries 1, 3, and 5). If you ran Query 5 with a loop count of 50 or 100, you will see a sustained plateau at high CPU for the duration.

Link to the workload:

| Query | Why it caused CPU | What you see in the chart |

|---|---|---|

| Query 1 (cross-join aggregation) | Hash joins across 4 tables, COUNT(DISTINCT) forcing sort-based dedup | Sharp spike at start |

| Query 3 (window functions) | Three window functions each requiring a full sort of 100K rows | Overlapping spike with Query 1 |

| Query 5 (loop × 20–100) | Sustained sequential scans and md5() computation in a tight loop | Flat plateau at high CPU |

2.2 — Split by dimension (optional)

Click Apply splitting → split by None (Flexible Server doesn’t split CPU by backend, but this is useful for other metrics like connections).

Tip: Click the pin icon to pin this chart to a dashboard. When running workshops or demos, having a pre-built dashboard saves time.

Step 3 — Observe Memory Pressure

- Click Add metric (or start a new chart)

- Metric: Memory percent

- Aggregation: Max

What to look for: Memory spikes correlate with:

- Query 3 (window functions): PostgreSQL materialises sort results in memory. If

work_memis sufficient, you see a memory spike. If not, it spills to disk (you will see that in Step 5 instead). - Query 1 (hash joins): Each hash join builds an in-memory hash table.

Healthy range: < 75%. If you consistently see > 85%, the server SKU may be too small for the workload, or shared_buffers / work_mem are misconfigured.

Step 4 — Observe IOPS and Storage

Add these metrics to see storage behaviour:

| Metric | Aggregation | What it shows |

|---|---|---|

| Read IOPS | Max | Disk reads — high during sequential scans |

| Write IOPS | Max | Disk writes — high during sorts that spill, checkpoints |

| Read Throughput | Max | Bytes/sec read from storage |

| Write Throughput | Max | Bytes/sec written to storage |

| Storage percent | Max | How full the disk is |

Link to the workload:

| Query | Expected I/O pattern |

|---|---|

| Query 1 (cross-join) | High read IOPS — sequential scan of all 4 tables from disk |

| Query 4 (md5 + string_agg + sort) | High write IOPS — sorts spill to temp files |

| Query 5 (loop) | Sustained read IOPS — same tables scanned 20–100 times |

| Query 6 (DISTINCT ON + sort) | Spike in write IOPS if the sort exceeds work_mem |

Important: Azure Flexible Server has IOPS limits based on SKU and storage size. If your queries hit the IOPS ceiling, the server throttles I/O and queries slow down dramatically. You can see this by comparing Read IOPS against the provisioned IOPS limit shown in the Overview blade.

Step 5 — Observe Temp Files and Connections

These less obvious metrics reveal important performance details:

5.1 — Temp files

- Metric: Temp Files Size (under the PostgreSQL-specific metrics)

- Aggregation: Max

Temp files are created when PostgreSQL runs a sort or hash that exceeds work_mem. This is a direct signal that your queries are too heavy for the current work_mem setting.

Link to the workload:

- Query 3 (window functions: 3 sorts on 100K rows) — most likely temp file generator

- Query 4 (sort by computed hash) — random sort order forces spill

- Query 6 (DISTINCT ON with multi-column sort) — spills if table is larger than

work_mem

What to do about it: Increasing work_mem reduces temp file usage but increases memory consumption per-session. The trade-off is:

Higher work_mem → fewer temp files → faster sorts → more RAM per connection

Lower work_mem → more temp files → slower sorts → less RAM per connection

For 10 concurrent connections with work_mem = 64MB, PostgreSQL may use up to 640MB just for sorts.

5.2 — Active connections

- Metric: Active Connections

- Aggregation: Max

What to look for: When you ran 3 concurrent queries in Step 7 of the workload section, you should see active connections jump from 1 to 3 (or more). If connections spike beyond what you expect, it may indicate:

- Connection pooling is not configured (each app request opens a new connection)

- A connection leak (connections are opened but never closed)

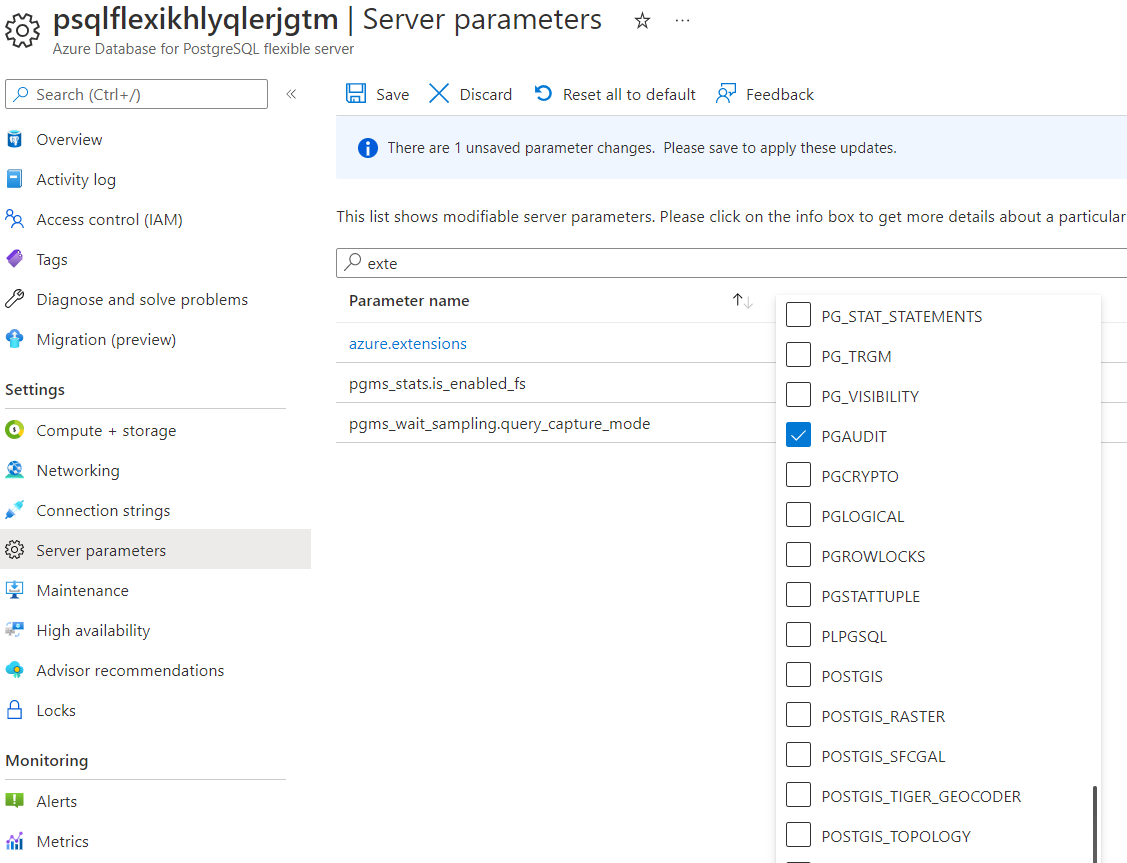

Step 6 — Enable pg_stat_statements

pg_stat_statements is a standard PostgreSQL extension that collects per-query execution statistics (calls, time, rows). It works on any PostgreSQL installation and is the foundation for query performance analysis.

- Go to Server parameters in the left menu

- Allow the extension first:

- Search for

azure.extensions - Click the dropdown — this shows all extensions available on Flexible Server

- Find and check

pg_stat_statements - Click Save (this does not require a restart)

- Search for

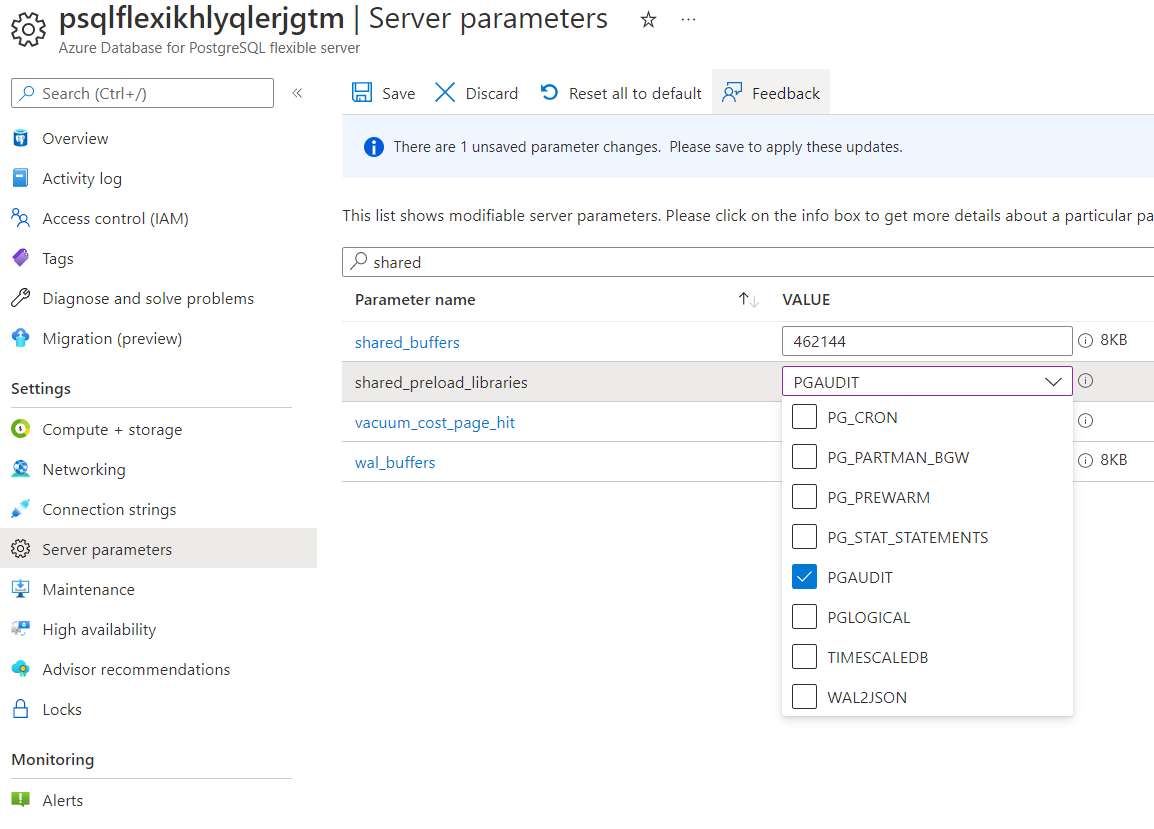

- Now enable the extension in the server:

- Search for

shared_preload_librariesand ensurepg_stat_statementsis checked - Search for

pg_stat_statements.trackand set it toALL

- Search for

- Click Save — this requires a server restart

Already done? If you enabled

pg_stat_statementsin Step 6 of the Load Data section, skip to Step 7.

After the restart, connect from the jumpbox and create the extension:

CREATE EXTENSION IF NOT EXISTS pg_stat_statements;

Verify it’s working:

SELECT calls, total_exec_time, mean_exec_time, rows, LEFT(query, 100) AS query

FROM pg_stat_statements

ORDER BY total_exec_time DESC

LIMIT 10;

You should see the demo workload queries at the top of the list.

Step 7 — Configure Diagnostic Settings and Query Store (Logs to Log Analytics)

While Metrics Explorer shows numbers, diagnostic settings capture detailed logs including individual query executions, autovacuum runs, and connection events. This is also a prerequisite for Query Performance Insight (Step 8).

This step requires both Azure Query Store (server-side data collection) and diagnostic settings (export to Log Analytics). Query Store is an Azure-specific feature — it is separate from the standard pg_stat_statements extension configured in Step 6.

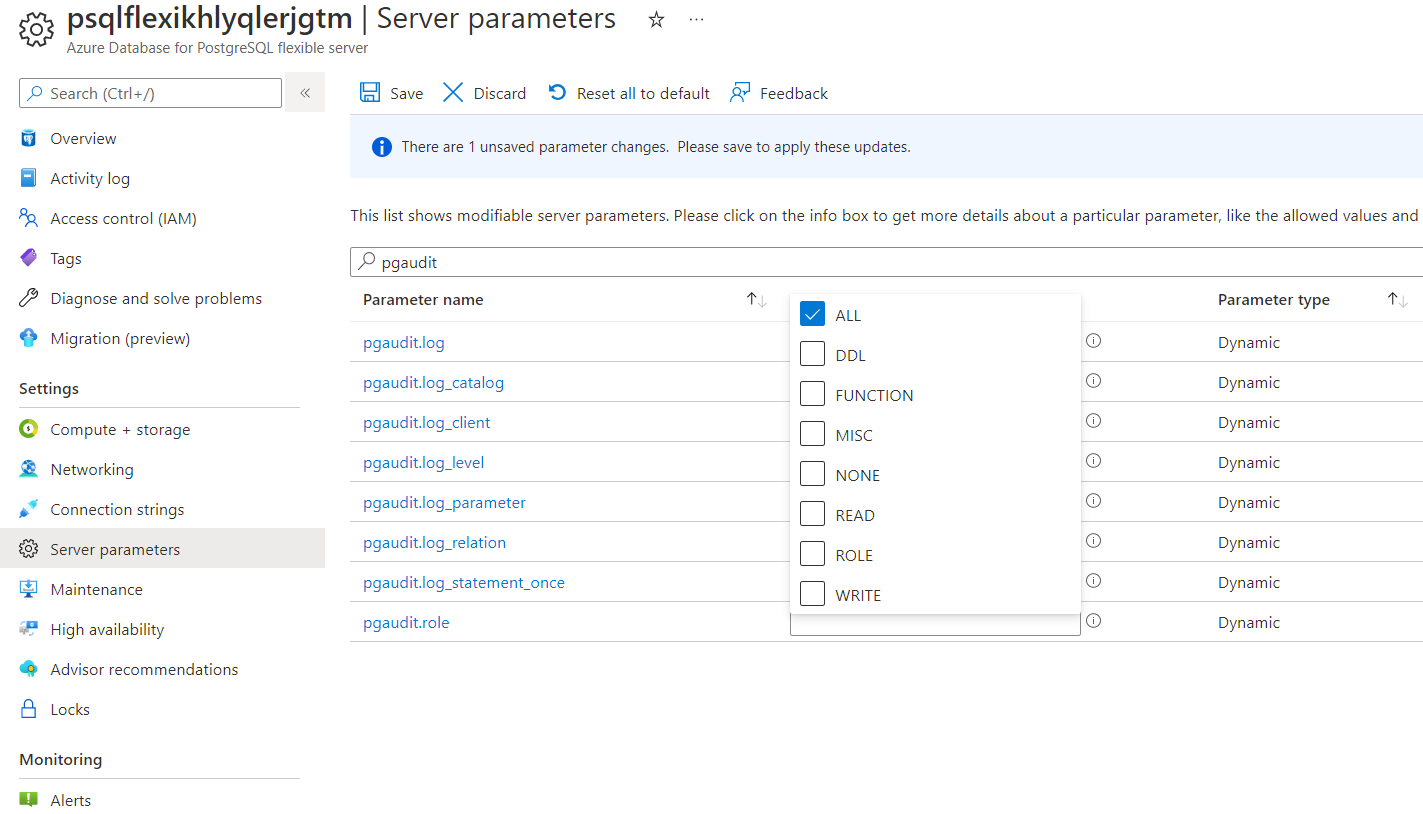

7.1 — Enable Query Store

- Go to Server parameters in the left menu

- Search for

pg_qs.query_capture_modeand set it to ALL (or TOP) - Search for

pgms_wait_sampling.query_capture_modeand set it to ALL - Click Save — this requires a server restart

| Parameter | Value | Why |

|---|---|---|

pg_qs.query_capture_mode |

ALL (or TOP) |

Enables Query Store — collects query runtime statistics that feed Query Performance Insight and the PostgreSQLQueryStoreRuntimeStatistics log category |

pgms_wait_sampling.query_capture_mode |

ALL |

Enables Query Store Wait Sampling — collects wait event data that feeds the PostgreSQLQueryStoreWaitStatistics log category |

Without these parameters, the diagnostic settings below will export empty log categories — and Query Performance Insight (Step 8) will show no data.

7.2 — Create a Log Analytics workspace (if you don’t have one)

az monitor log-analytics workspace create \

--resource-group <resource-group> \

--workspace-name pg-workshop-logs \

--location uksouth

7.3 — Enable diagnostic settings

- In the PostgreSQL server blade, click Diagnostic settings (under Monitoring)

- Click + Add diagnostic setting

- Name:

pg-logs - Check the log categories:

- PostgreSQL Sessions — connection/disconnection events

- PostgreSQL Query Store Runtime — query execution statistics

- PostgreSQL Query Store Wait Statistics — what queries waited on

- Check AllMetrics

- Destination: Send to Log Analytics workspace → select

pg-workshop-logs - Click Save

Logs take 5–10 minutes to start appearing in Log Analytics. Re-run a few demo workload queries to generate fresh data while you wait.

7.4 — Verify data is flowing

Before running any analytical queries, confirm that logs are arriving in your Log Analytics workspace. Go to your Log Analytics workspace → Logs and run:

AzureDiagnostics

| where ResourceProvider == "MICROSOFT.DBFORPOSTGRESQL"

| summarize count() by Category

You should see categories including PostgreSQLQueryStoreRuntimeStatistics, PostgreSQLQueryStoreWaitStatistics, and PostgreSQLSessions. If this returns no results, check that: (1) diagnostic settings in Step 7.2 are saved and pointing to this workspace, (2) Query Store is enabled in Step 7.1, (3) you’ve waited at least 5–10 minutes.

7.5 — Query the logs with KQL

Go to your Log Analytics workspace → Logs. Try these queries:

Slow queries (> 5 seconds):

AzureDiagnostics

| where ResourceProvider == "MICROSOFT.DBFORPOSTGRESQL"

| where Category == "PostgreSQLQueryStoreRuntimeStatistics"

| where mean_time_d > 5

| project TimeGenerated, db_name_s, query_id_d, calls_d, mean_time_d, total_time_d

| order by total_time_d desc

| take 20

Connection events:

AzureDiagnostics

| where ResourceProvider == "MICROSOFT.DBFORPOSTGRESQL"

| where Category == "PostgreSQLSessions"

| project TimeGenerated, event_s, user_s, client_ip_s, db_name_s

| order by TimeGenerated desc

| take 50

Wait events (what are queries waiting on):

AzureDiagnostics

| where ResourceProvider == "MICROSOFT.DBFORPOSTGRESQL"

| where Category == "PostgreSQLQueryStoreWaitStatistics"

| summarize total_wait_ms = sum(total_time_d * 1000) by event_s, query_id_d

| order by total_wait_ms desc

| take 20

No results? If the KQL queries return empty, verify: (1)

pg_qs.query_capture_modeis set toALLorTOPin Step 7.1, (2) diagnostic settings in Step 7.3 include the Query Store log categories, (3) you’ve waited at least 5–10 minutes after configuration.

Step 8 — Query Performance Insight

This is the most powerful built-in tool for finding slow queries without installing any extensions.

Prerequisites: Step 7 must be completed first. Query Performance Insight requires Query Store (

pg_qs.query_capture_mode), Query Store Wait Sampling (pgms_wait_sampling.query_capture_mode), and a Log Analytics workspace with diagnostic settings configured. See the official prerequisites.

- In the left menu, under Intelligent Performance, click Query Performance Insight

- You will see tabs: Long running queries, Top queries by CPU, Top queries by IO

8.1 — Long running queries

This tab shows queries that took the longest wall-clock time. After the demo workload, you should see:

| Expected query | Why it appears here |

|---|---|

| Query 5 (DO $$ loop) | Ran for 30+ seconds |

| Query 1 (cross-join aggregation) | Multi-table join with aggregation |

| Query 3 (window functions) | Three sorts on 100K rows |

Note: Query Performance Insight normalises queries — it replaces literal values with

$1,$2placeholders so identical queries with different parameters are grouped together. TheDO $$ ... $$block appears as a single query.

8.2 — Top queries by CPU

Switch to the CPU tab. This shows queries ranked by total CPU time consumed.

Expected top entries after the workload:

| Rank | Query | Why |

|---|---|---|

| 1 | Query 5 (loop) | md5() computed on 300K rows × 20–100 iterations |

| 2 | Query 1 (cross-join) | Hash joins + COUNT(DISTINCT) + final sort |

| 3 | Query 4 (md5 + string_agg) | md5() on every row + string_agg with internal sort |

8.3 — Top queries by IO

Switch to the IO tab. This ranks queries by blocks read from storage.

Expected top entries:

| Rank | Query | Why |

|---|---|---|

| 1 | Query 5 (loop) | Sequential scan of orders + order_items × 20–100 times |

| 2 | Query 2 (correlated subquery) | ~20,000 sequential scans of orders |

| 3 | Query 1 (cross-join) | Full scan of all 4 tables |

Tip: Click on any query bar to see the full query text and its execution timeline.

Step 9 — Create an Alert Rule

Set up an alert so you are notified when CPU exceeds a threshold — this simulates a production monitoring setup.

9.1 — Create the alert

- In the PostgreSQL server blade → Alerts (under Monitoring)

- Click + Create → Alert rule

- Condition:

- Signal: CPU percent

- Operator: Greater than

- Threshold: 80

- Aggregation type: Maximum

- Aggregation granularity: 5 minutes

- Frequency of evaluation: 1 minute

- Click Next: Actions

9.2 — Create an action group

- Click + Create action group

- Name:

workshop-alerts - Notification type: Email/SMS/Push/Voice

- Enter your email address

- Click Review + create → Create

9.3 — Complete the alert rule

- Alert rule name:

High CPU Alert - Severity: 2 - Warning

- Click Review + create → Create

9.4 — Test the alert

Go back to the jumpbox and re-run Query 5 with a higher loop count:

\c orders_demo

DO $$

BEGIN

FOR i IN 1..100 LOOP

PERFORM COUNT(*) FROM orders o

JOIN order_items oi ON oi.order_id = o.order_id

WHERE md5(o.status || oi.quantity::TEXT) LIKE '00%';

END LOOP;

END $$;

Within a few minutes, you should receive an email alert that CPU exceeded 80%.

Clean up: After testing, you can disable the alert rule to avoid further notifications. Go to Alerts → Alert rules → click the rule → Disable.

Step 10 — Correlating Metrics to Queries (Summary)

This is the key skill — when you see a metric spike in the portal, how do you trace it back to a specific query?

Here is how each demo query maps to the metrics you observed:

| Query | CPU | Memory | Read IOPS | Write IOPS | Temp Files | Root Cause |

|---|---|---|---|---|---|---|

| Q1 — Cross-join aggregation | High | Medium | High | Low | Low | No indexes on FK columns → sequential scans + hash joins |

| Q2 — Correlated subquery | High | Low | Very High | Low | Low | No index on orders.customer_id → 20K sequential scans |

| Q3 — Window functions | High | High | Medium | Medium–High | High | Three sorts on 100K rows → memory pressure → temp file spill |

| Q4 — md5 + string_agg | Medium | Medium | High | Medium | Medium | Per-row function evaluation + sort by computed value |

| Q5 — Loop × 20–100 | Very High | Low | Very High | Low | Low | Sustained CPU: same expensive query in tight loop |

| Q6 — DISTINCT ON + sort | Medium | Medium | Medium | Medium | Medium | Full sort of 100K rows → temp file if work_mem too low |

Step 11 — Azure Monitor Workbooks (Optional — Instructor Demo)

Azure provides pre-built Workbooks for PostgreSQL that combine multiple metrics and logs into a single dashboard.

- In the PostgreSQL blade → Workbooks (under Monitoring)

- Browse the gallery — look for:

- PostgreSQL Flexible Server Overview — CPU, memory, connections, IOPS in one view

- Query Performance — visualises

pg_stat_statementsdata over time

- Click on a workbook to open it. You can filter by time range and server instance.

When to use Workbooks vs. Metrics Explorer:

- Metrics Explorer — ad-hoc investigation, “what is happening right now”

- Workbooks — pre-built dashboards for ongoing monitoring, good for handoff to ops teams

- Query Performance Insight — developer-focused, “which query is the problem”

- Log Analytics (KQL) — deepest level, cross-correlate logs with metrics, custom alerting

Step 12 — Recommended Server Parameters for Monitoring

Before leaving this section, verify these server parameters are enabled. They control what telemetry PostgreSQL and Azure collect:

- Go to Server parameters in the portal

- Search for and verify each parameter:

Azure-Specific Parameters (Flexible Server only)

These parameters are unique to Azure Database for PostgreSQL — Flexible Server and feed data into Azure Monitor. They do not exist in community PostgreSQL.

| Parameter | Recommended value | Why |

|---|---|---|

metrics.collector_database_activity |

on |

Enables Enhanced Metrics — per-database activity counters (transactions/sec, tuples inserted/updated/deleted, session counts) in Azure Monitor. Dynamic — no restart needed |

metrics.autovacuum_diagnostics |

on |

Surfaces autovacuum performance metrics (tables processed, duration, dead-tuple removal rate) in Azure Monitor so you can track vacuum health without parsing logs |

Standard PostgreSQL Parameters

These are community PostgreSQL parameters that work on any PostgreSQL installation. They control internal statistics collection and logging.

| Parameter | Recommended value | Why |

|---|---|---|

track_io_timing |

on |

Enables I/O timing in pg_stat_activity and EXPLAIN (BUFFERS) |

track_activities |

on |

Required for pg_stat_activity to show current queries |

track_counts |

on |

Required for pg_stat_user_tables counters (vacuum, scans) |

pg_stat_statements.track |

all |

Track queries in all contexts (top-level + nested) |

log_min_duration_statement |

5000 |

Log any query taking > 5 seconds to the PostgreSQL log |

log_checkpoints |

on |

Log checkpoint start/end with timing — helps diagnose I/O spikes |

log_connections |

on |

Log each new connection — helps detect connection storms |

log_disconnections |

on |

Log disconnections with session duration |

log_temp_files |

0 |

Log every temp file creation (value is minimum size in KB; 0 = all) |

log_autovacuum_min_duration |

0 |

Log every autovacuum run — essential for vacuum monitoring |

log_lock_waits |

on |

Log when a session waits longer than deadlock_timeout for a lock |

Note: Changing

shared_preload_librariesrequires a server restart. All other parameters above can be applied dynamically.

What You Learned

In this section you:

- Observed CPU, memory, IOPS, and temp file metrics in Azure Metrics Explorer and correlated each spike to a specific demo query

- Enabled

pg_stat_statementsto collect per-query execution statistics (standard PostgreSQL) - Configured Query Store and diagnostic settings to send PostgreSQL logs to Log Analytics and wrote KQL queries to analyse them

- Used Query Performance Insight to identify the top queries by CPU consumption and I/O without needing SSH access to the server

- Created an alert rule that sends a notification when CPU exceeds a threshold

- Learned the triage workflow: Metric spike → timestamp → Query Performance Insight → EXPLAIN ANALYZE → fix → verify

These skills apply directly to production operations. The demo workload intentionally created the same problems you will see in real applications — missing indexes, sequential scans, temp file spills, and sustained CPU from poorly written queries. The next sections (Database Profiling, MVCC, Statistics & Query Planning) will teach you to diagnose and fix these problems from the PostgreSQL side.

Administration & Access Control

Managing PostgreSQL DB

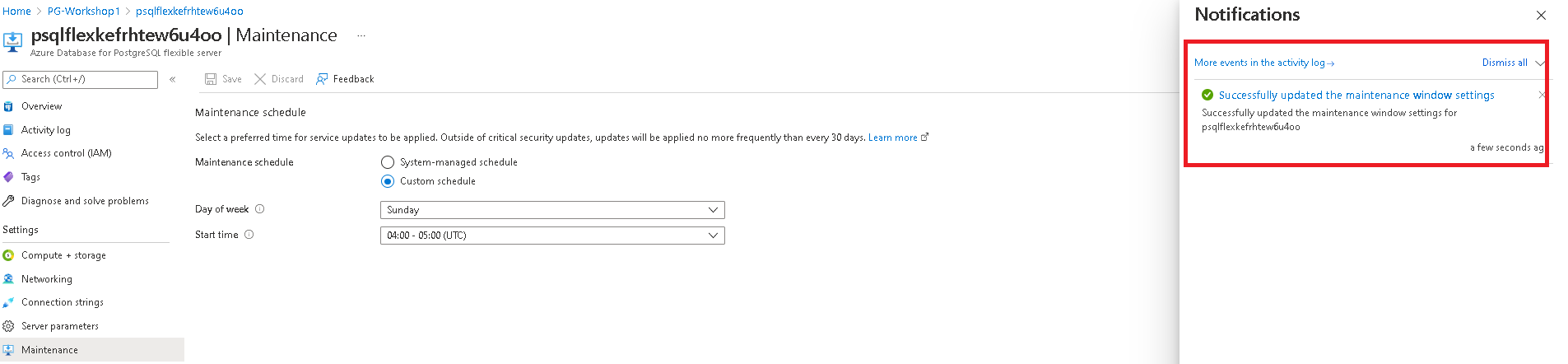

Note: The Azure Portal is updated frequently. Screenshots in this section may look slightly different from what you see, but the functionality and steps are the same — you will be able to perform all tasks described here.

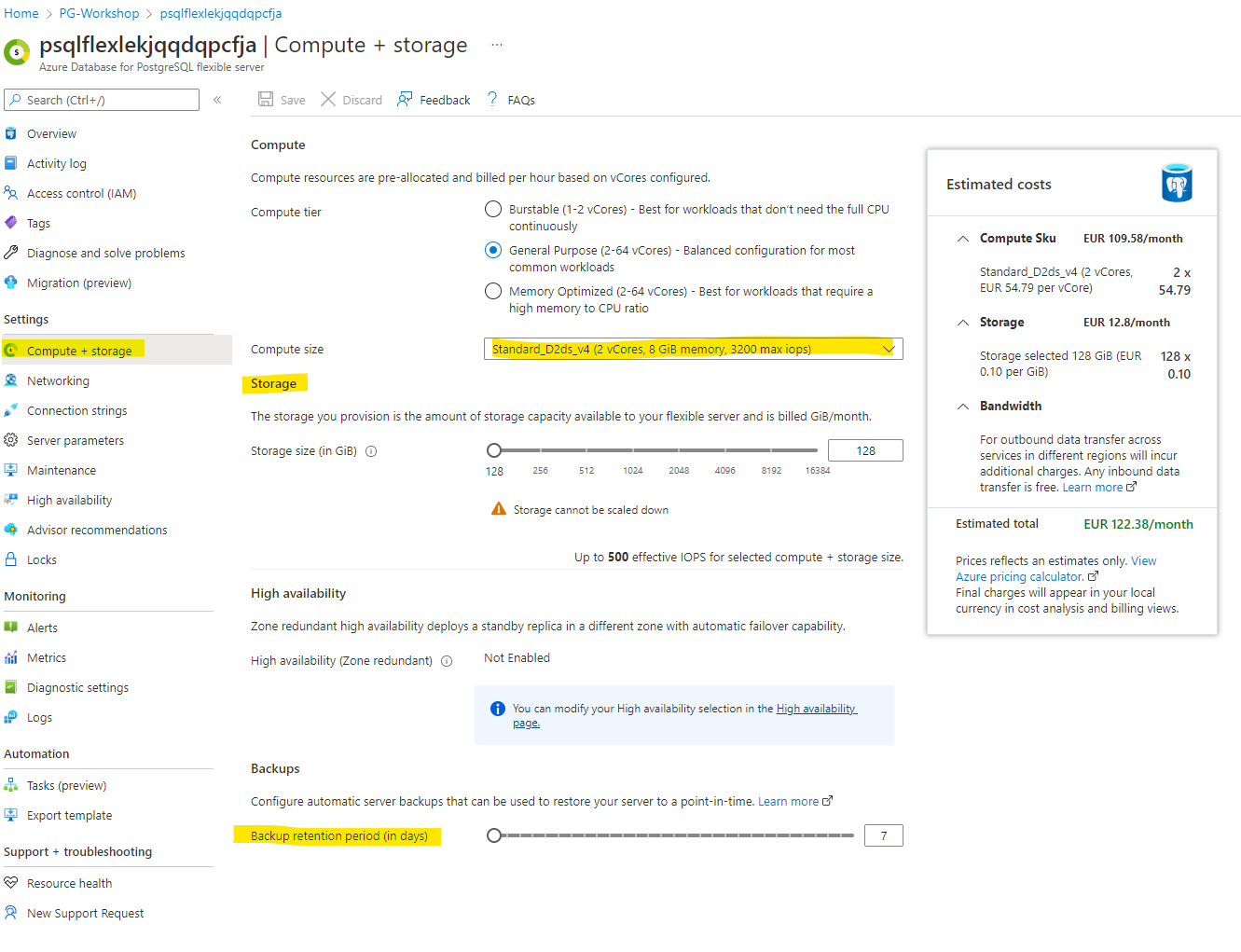

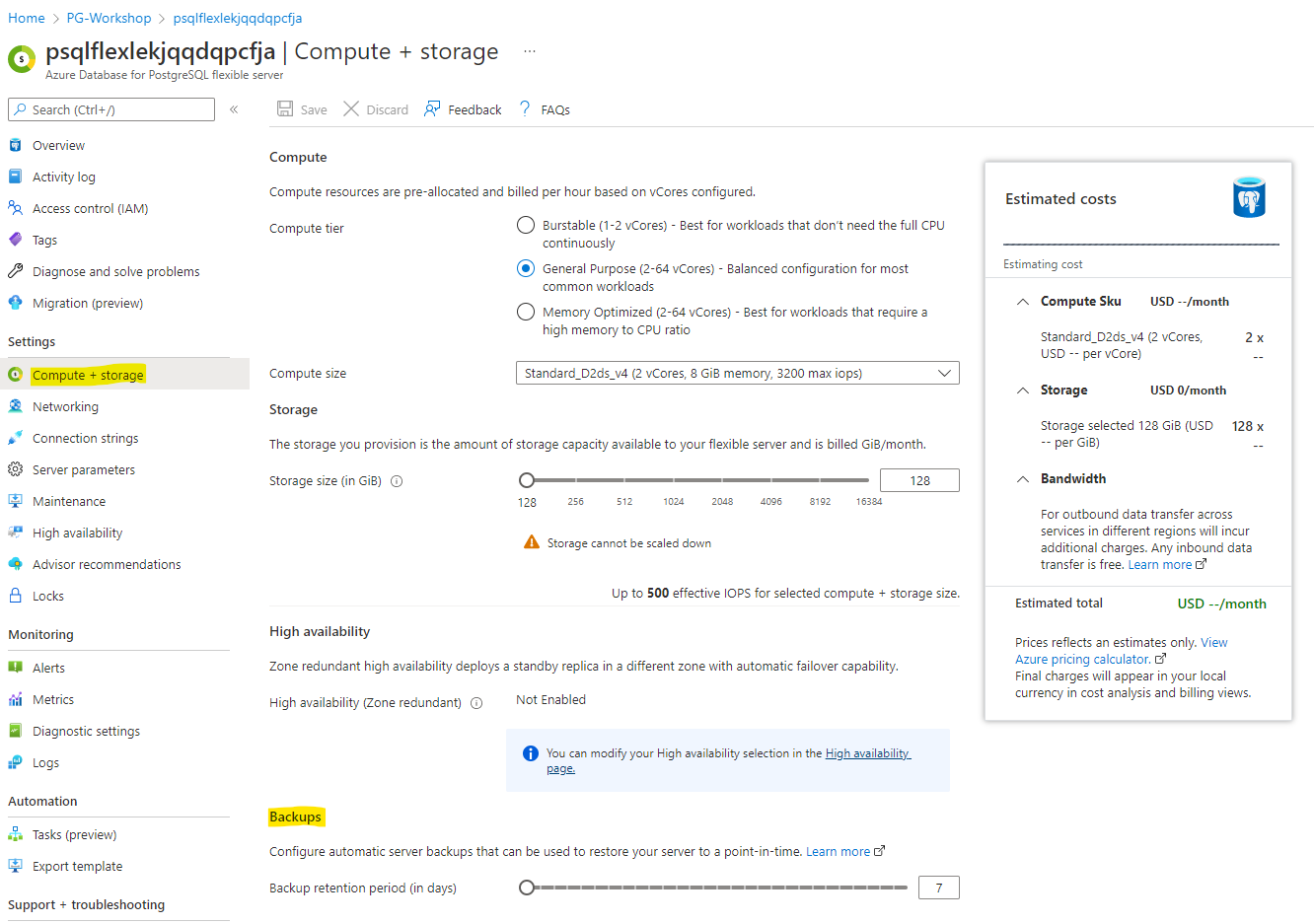

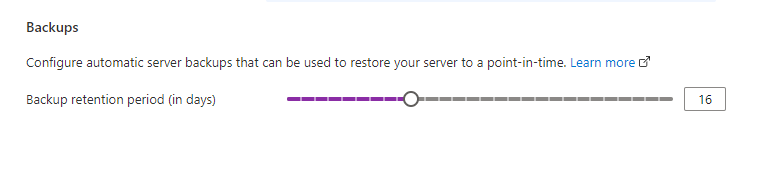

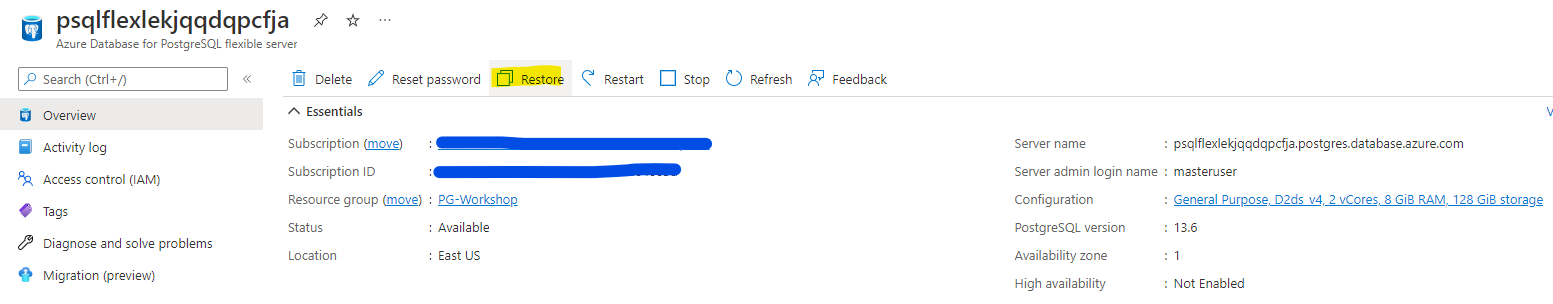

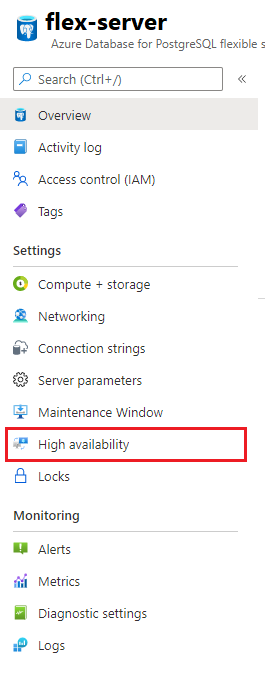

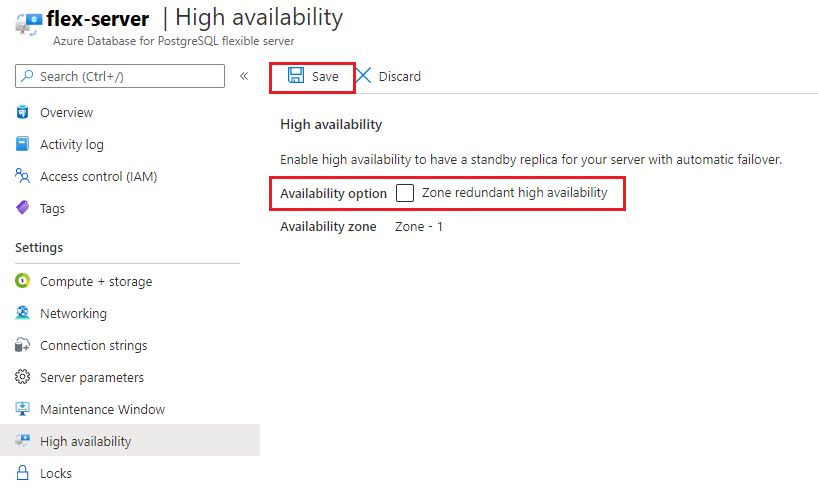

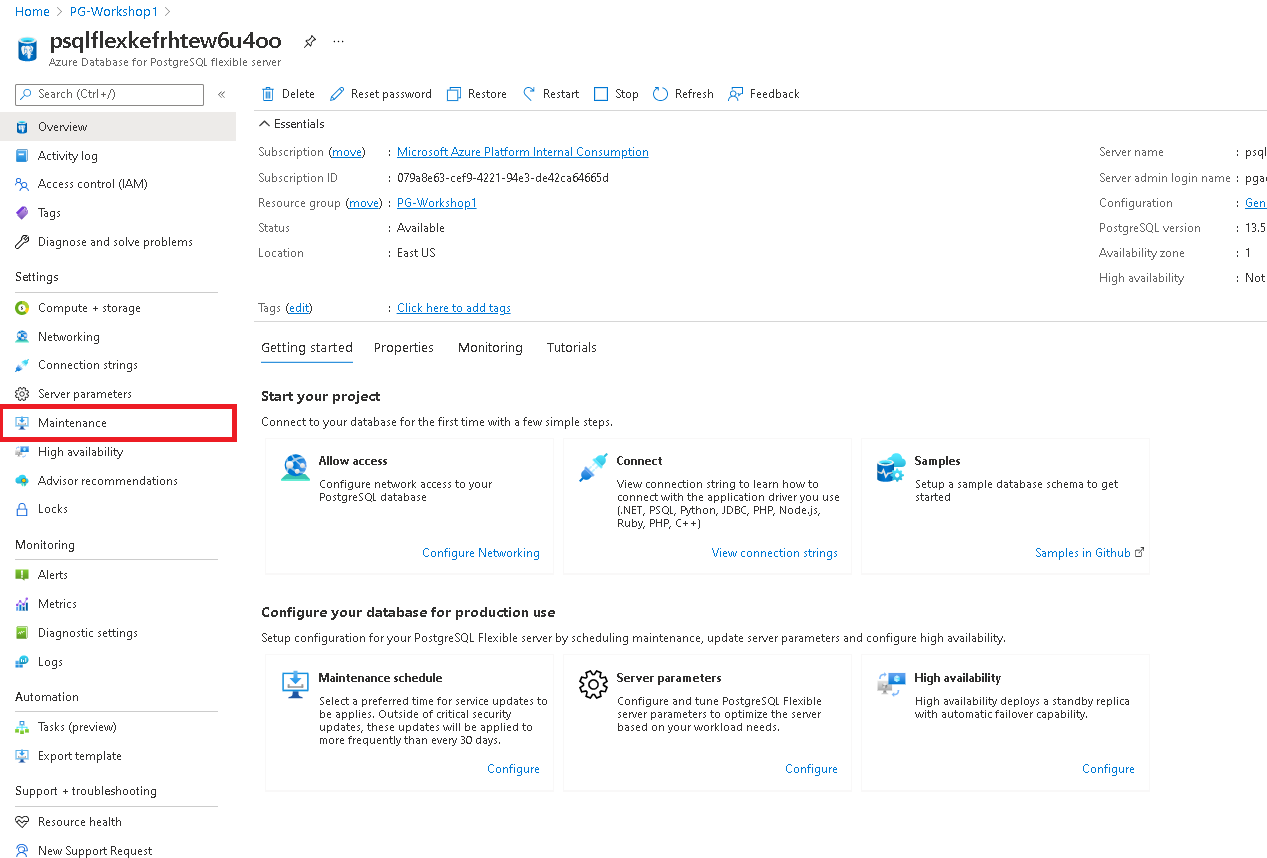

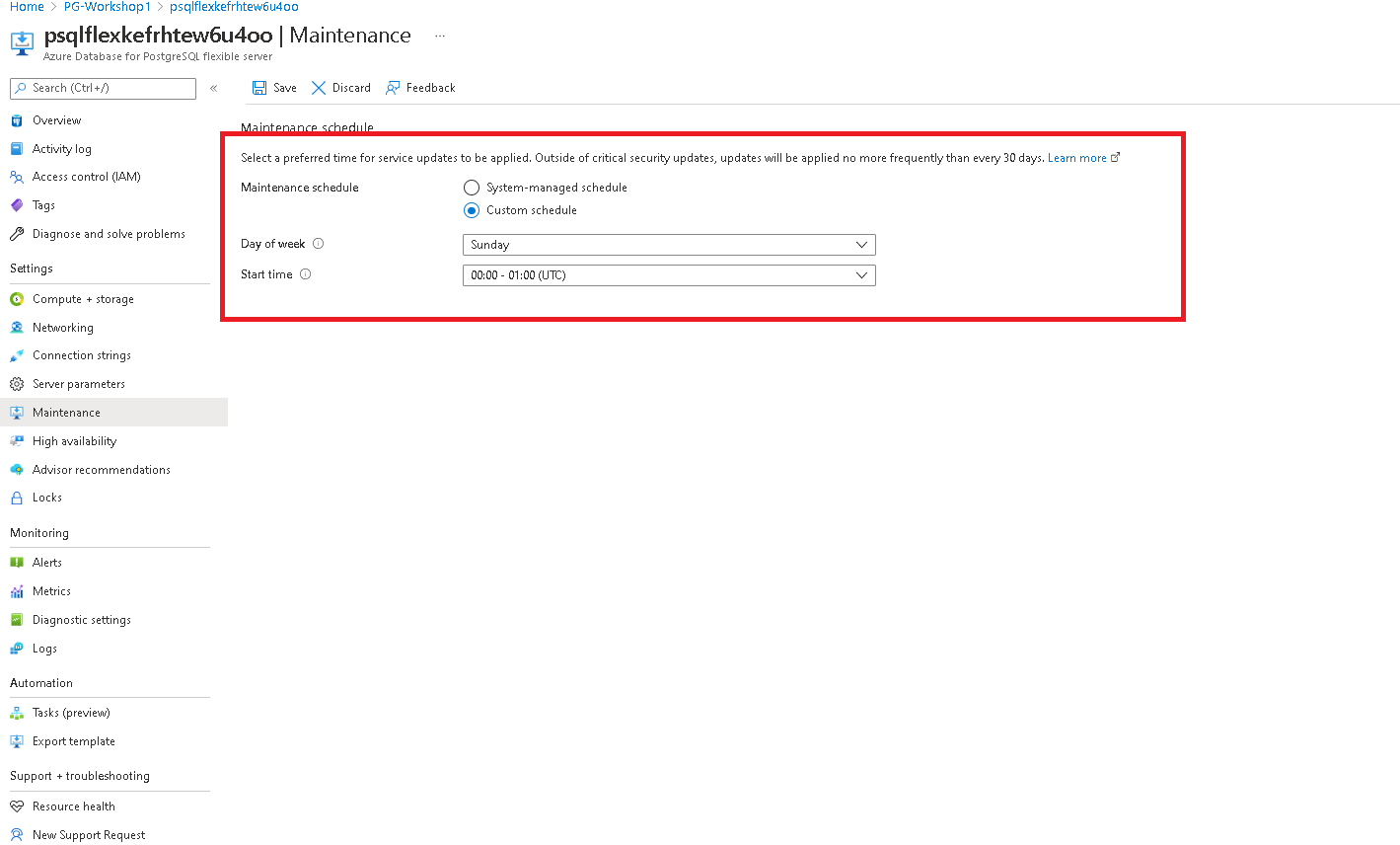

Managing Compute and Storage

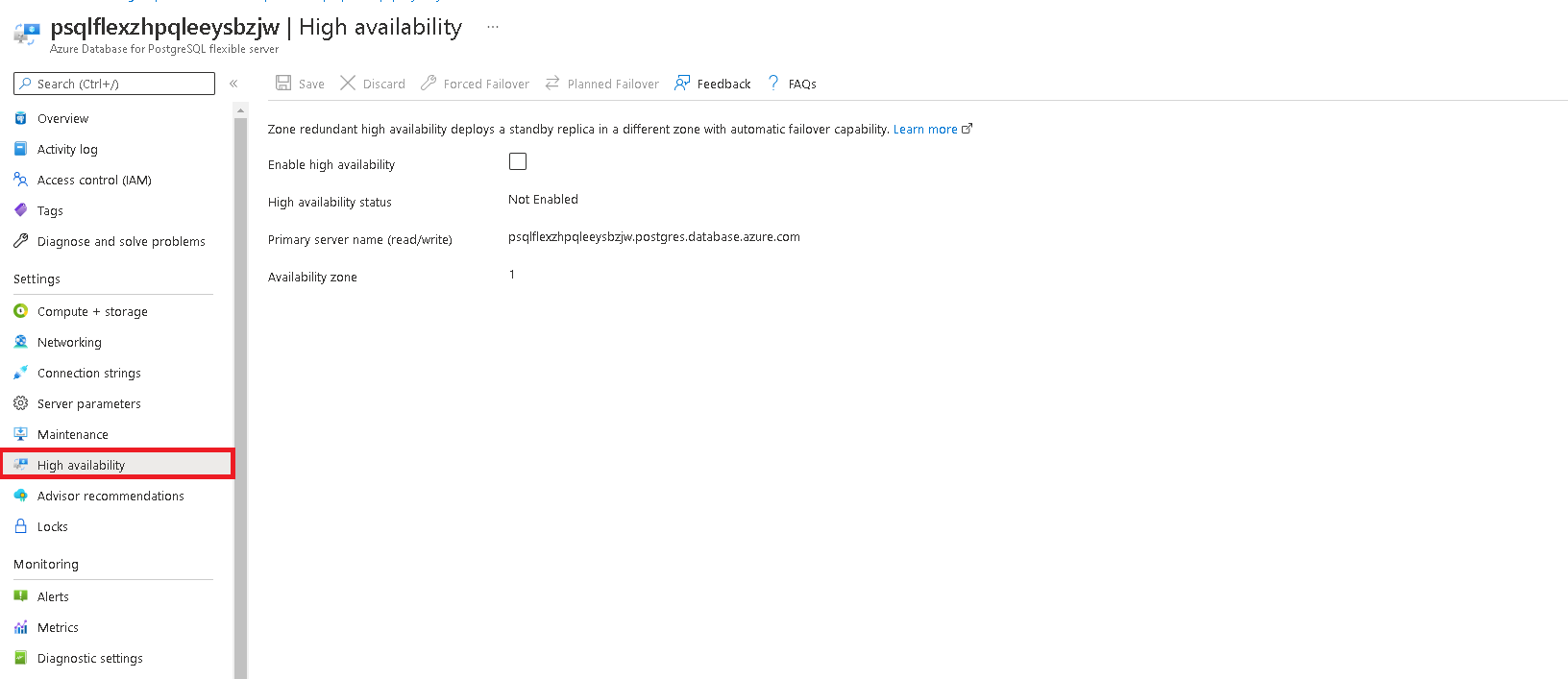

Navigate to Compute + Storage to alter storage and compute settings. You can also change the backup retention period here.

Note: Increasing the compute size may incur additional costs. Only adjust if necessary.

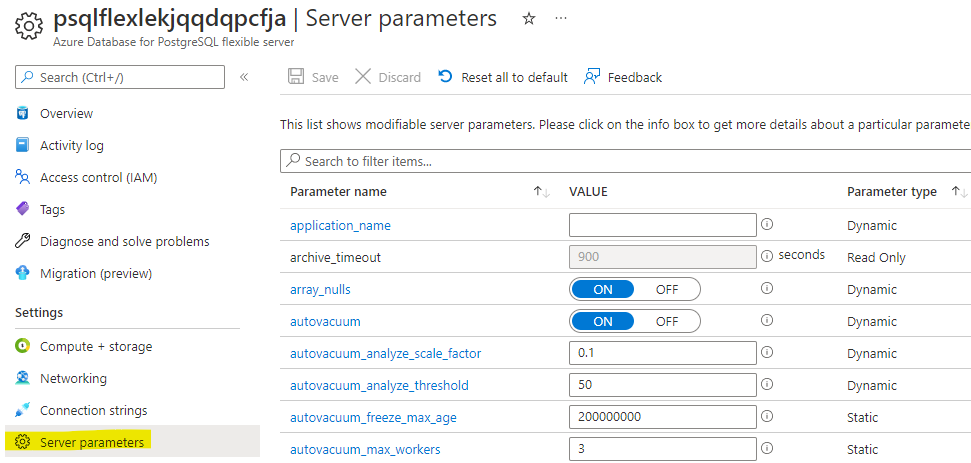

Managing Server Parameters

PostgreSQL’s behaviour is controlled by hundreds of server parameters (also called GUCs — Grand Unified Configuration). These control everything from memory allocation (shared_buffers, work_mem) to query planning (random_page_cost), logging (log_min_duration_statement), replication, autovacuum thresholds, and more.

In a self-managed PostgreSQL installation, you would edit postgresql.conf directly. On Azure Database for PostgreSQL Flexible Server, you do not have access to configuration files. Instead, you manage parameters through the Azure Portal (Server parameters blade) or via the Azure CLI / REST API.

Changes made in the portal apply as the server-level default — they affect all databases, roles, and sessions unless overridden at a lower level.

Parameter types: Dynamic, Static, and Read-Only

Not all parameters behave the same way when you change them:

| Type | Behaviour | Examples |

|---|---|---|

| Dynamic | Takes effect immediately — no restart needed | work_mem, log_min_duration_statement, statement_timeout |

| Static | Requires a server restart to take effect. The portal will prompt you to restart after saving. | shared_buffers, shared_preload_libraries, max_connections |

| Read-only | Managed by Azure — you cannot change these. They are set based on your SKU and storage tier. | max_locks_per_transaction, block_size |

Tip: In the portal, the Server parameters blade shows a note next to each parameter indicating whether it is dynamic or requires a restart.

Scope levels

You can override the server-level default at narrower scopes. Each level inherits from the one above unless explicitly overridden:

| Scope | How to set | Lifetime | Use case |

|---|---|---|---|

| Server (global default) | Azure Portal / CLI | Persists across restarts | Baseline configuration for all workloads |

| Database | ALTER DATABASE dbname SET param = value; |

Persists — applies to all sessions in that database | Different work_mem for an analytics DB vs. OLTP DB |

| Role | ALTER ROLE username SET param = value; |

Persists — applies whenever that role connects | Higher statement_timeout for a batch-processing role |

| Session | SET param = value; |

Current session only — lost on disconnect | Temporary tuning for a specific query or script |

To check the current effective value of any parameter in your session:

SHOW work_mem;

To see all non-default parameter values:

SELECT name, setting, source

FROM pg_settings

WHERE source != 'default'

ORDER BY name;

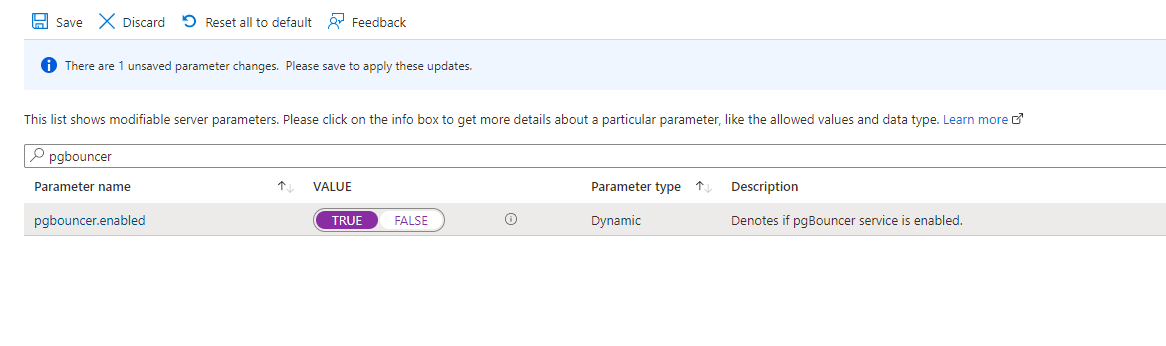

To enable PgBouncer, type pgbouncer in the search box and set its value to TRUE:

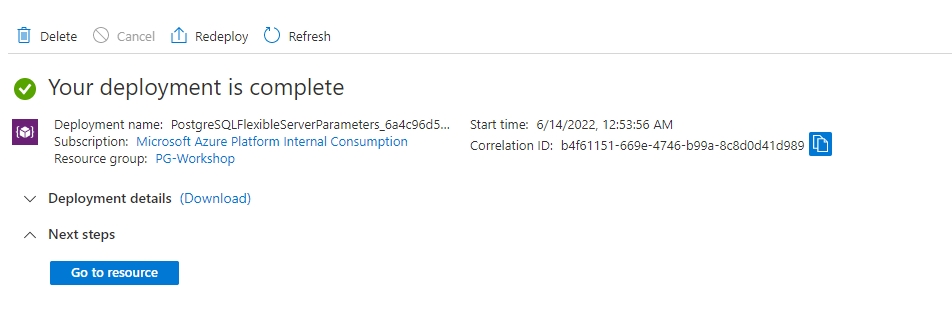

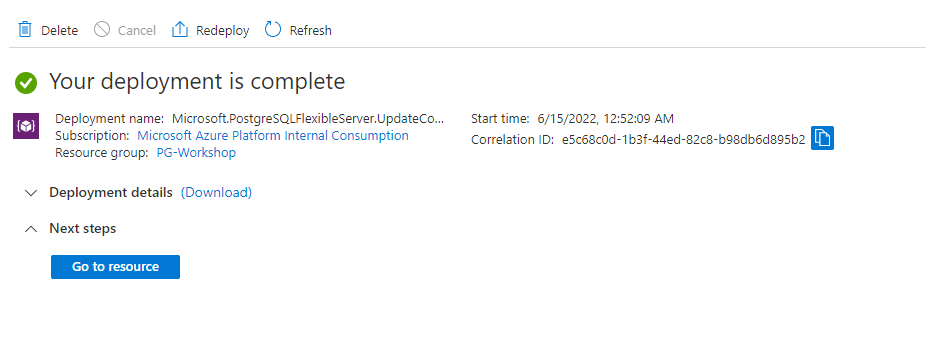

Click Save and wait for the deployment to complete successfully:

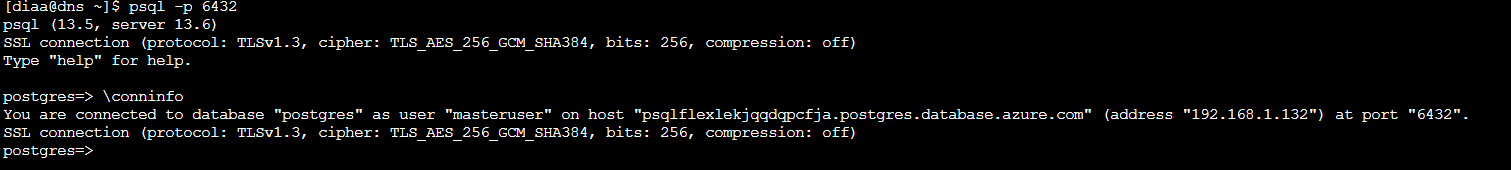

Once you see the success screen, access PostgreSQL through port 6432 on your VM:

psql -p 6432

Want to go deeper? For pool modes,

pgbenchload testing, and PgBouncer monitoring commands, see Connection Pooling with PgBouncer.

Applying Server Locks

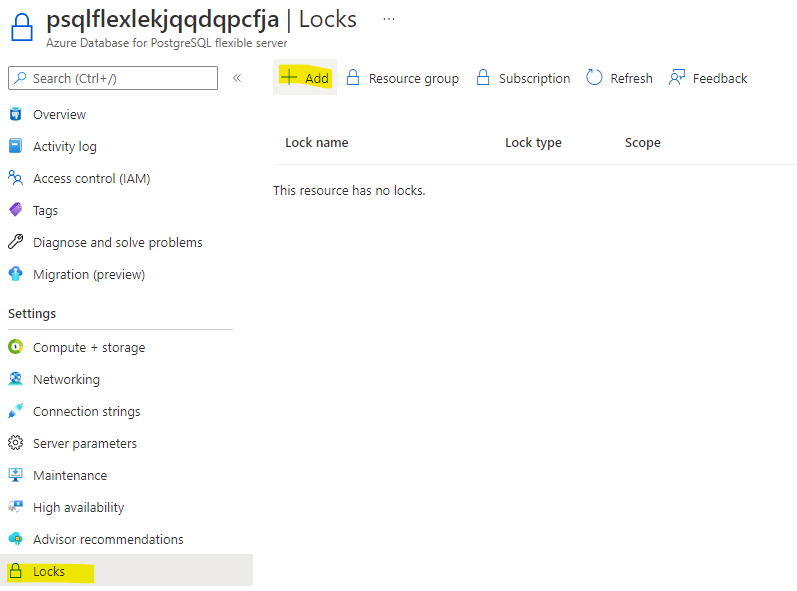

Navigate to Locks:

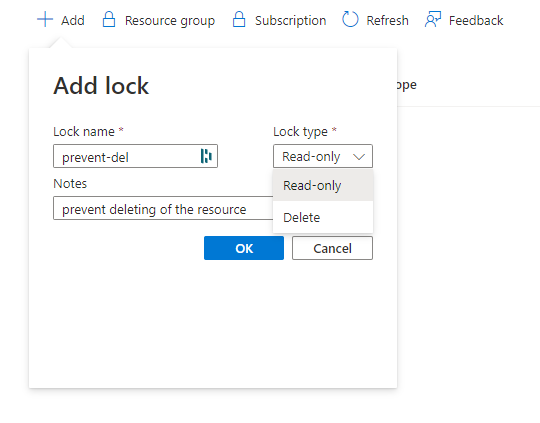

Click +Add, enter a lock name of your choice, and select the lock type Delete:

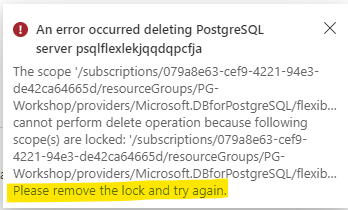

If you attempt to delete the server, you should see an error similar to the following:

Roles and Permissions

In this section you will learn how PostgreSQL manages access control through its role system — creating roles, granting privileges, understanding inheritance, and applying least-privilege principles.

Prerequisite: You should have the

orders_demodatabase restored from the Load Data section.

What You Will Build

By the end of this lab you will have built a complete access model for the orders_demo database with three different user profiles, each demonstrating a different security pattern.

Why this matters: In production, you should never give every user the admin credentials you created during deployment. Instead, create group roles that hold privileges, then assign users to those groups. This is the same principle as Active Directory groups or IAM roles — but implemented at the PostgreSQL level.

| Who | Role chain | Behaviour | Real-world scenario |

|---|---|---|---|

| dev_user | dev_user → app_team (INHERIT) |

Connects and immediately has read/write access | Application service account, backend developer |

| analyst_user | analyst_user → readonly (INHERIT) |

Connects and immediately has SELECT-only access | BI analyst, reporting dashboard, read replica user |

| contractor_user | contractor_user → app_team (NOINHERIT) |

Connects with no privileges — must SET ROLE to elevate |

External contractor, break-glass admin, audited access |

Key concepts you will practice:

- Roles are the only access object — PostgreSQL has no separate “users” and “groups.” Everything is a role.

CREATE USERis just an alias forCREATE ROLE ... LOGIN. - INHERIT vs NOINHERIT — controls whether a member automatically gets the parent role’s privileges (like a normal group) or must explicitly activate them (like

sudo). - Privilege codes — the compact letter codes (

r,a,w,d,D,x,t) that PostgreSQL uses to represent SELECT, INSERT, UPDATE, DELETE, TRUNCATE, REFERENCES, and TRIGGER. - Default privileges — ensuring future tables get the right grants automatically, not just tables that exist today.

- Schema isolation — revoking the overly permissive default on the

publicschema.

The access model is split into three paths — one per user profile:

1) app_team path (dev_user)

Value by step:

- Establishes a controlled admin starting point for governance.

- Centralizes write permissions in one reusable group role.

- Connects a real user to the role with automatic inheritance.

- Delivers immediate app productivity without manual role switching.

Real-world scenario: Application service accounts, backend developers — anyone who needs read/write access and should get it automatically on connect.

2) analyst_user path (readonly)

Value by step:

- Keeps role creation in a single trusted admin workflow.

- Encapsulates reporting permissions as SELECT-only.

- Grants analysts safe default access through inheritance.

- Prevents accidental writes while enabling BI/reporting use cases.

Real-world scenario: BI analysts, reporting dashboards, read replica users — anyone who should see data but never modify it.

3) contractor_user path (NOINHERIT)

Value by step:

- Defines a privileged role, but does not expose it by default.

- Associates contractor identity with explicit guardrails (

NOINHERIT). - Starts each session in low-privilege mode to reduce blast radius.

- Requires intentional elevation (

SET ROLE) for controlled operations. - Enables temporary write capability with full operator intent.

Real-world scenario: External contractors, break-glass admin accounts, audited access — anyone who can have elevated access but must consciously activate it each time.

Understanding Roles in PostgreSQL

PostgreSQL has a single concept for managing access: the role. There are no separate “users” and “groups” — everything is a role. The CREATE USER and CREATE GROUP commands are just convenience aliases:

| Command | What it actually does |

|---|---|

CREATE ROLE app_role; |

Creates a role that cannot login by default |

CREATE USER app_user; |

Same as CREATE ROLE app_user LOGIN; — adds login capability |

CREATE GROUP app_group; |

Same as CREATE ROLE app_group; — deprecated alias |